BIG DATA ANALYTICS

[R17A0528]

LECTURE NOTES

B.TECH IV YEAR – I SEM (R17)

(2020-2021)

MALLA REDDY

COLLEGE OF ENGINEERING & TECHNOLOGY

(Autonomous Institution – UGC, Govt. of India)

Recognized under 2(f) and 12 (B) of UGC ACT 1956

(Affiliated to JNTUH, Hyderabad, Approved by AICTE - Accredited by NBA & NAAC – ‘A’ Grade - ISO 9001:2015 Certified)

Maisammaguda, Dhulapally (Post Via. Hakimpet), Secunderabad – 500100, Telangana State, India

(R17A0528) BIG DATA ANALYTICS

UNIT I

INTRODUCTION TO BIG DATA AND ANALYTICS

Classification of Digital Data, Structured and Unstructured Data - Introduction to Big Data: Characteristics - Evolution - Definition - Challenges with Big Data - Other Characteristics of Data - Why Big Data - Traditional Business Intelligence versus Big Data - Data Warehouse and Hadoop Environment - Big Data Analytics: Classification of Analytics - Challenges - Importance of Big Data Analytics - Data Science - Data Scientist - Terminologies used in Big Data Environments - Basically Available, Soft State, Eventual Consistency (BASE) - Top Analytics Tools

UNIT II

INTRODUCTION TO TECHNOLOGY LANDSCAPE

NoSQL, Comparison of SQL and NoSQL, Hadoop - RDBMS Versus Hadoop - Distributed Computing Challenges - Hadoop Overview - Hadoop Distributed File System - Processing Data with Hadoop - Managing Resources and Applications with Hadoop YARN - Interacting with Hadoop Ecosystem

UNIT III

INTRODUCTION TO MONGODB AND MAPREDUCE PROGRAMMING

MongoDB: Why MongoDB - Terms used in RDBMS and MongoDB - Data Types - MongoDB Query Language

MapReduce: Mapper – Reducer – Combiner – Partitioner – Searching – Sorting – Compression

UNIT IV

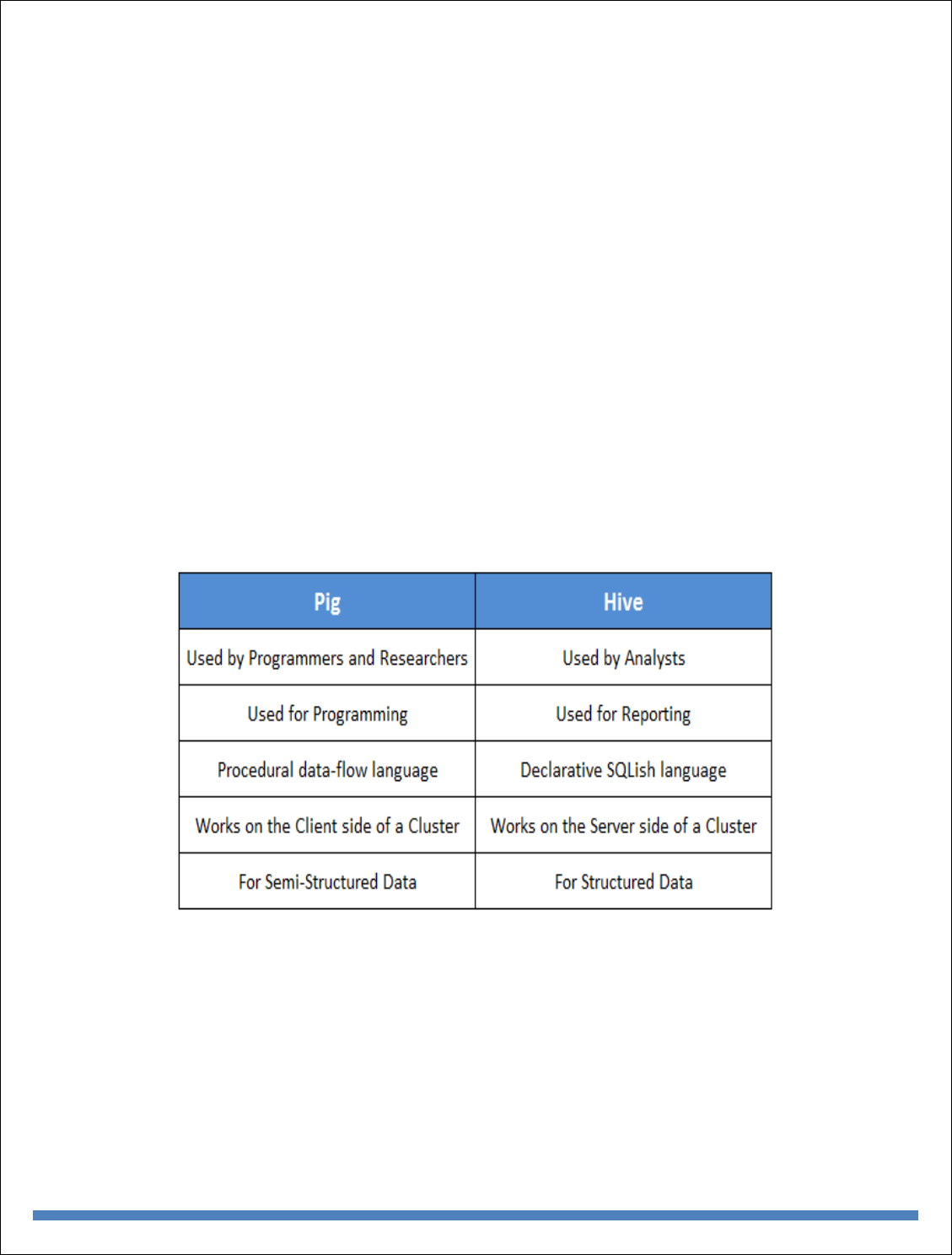

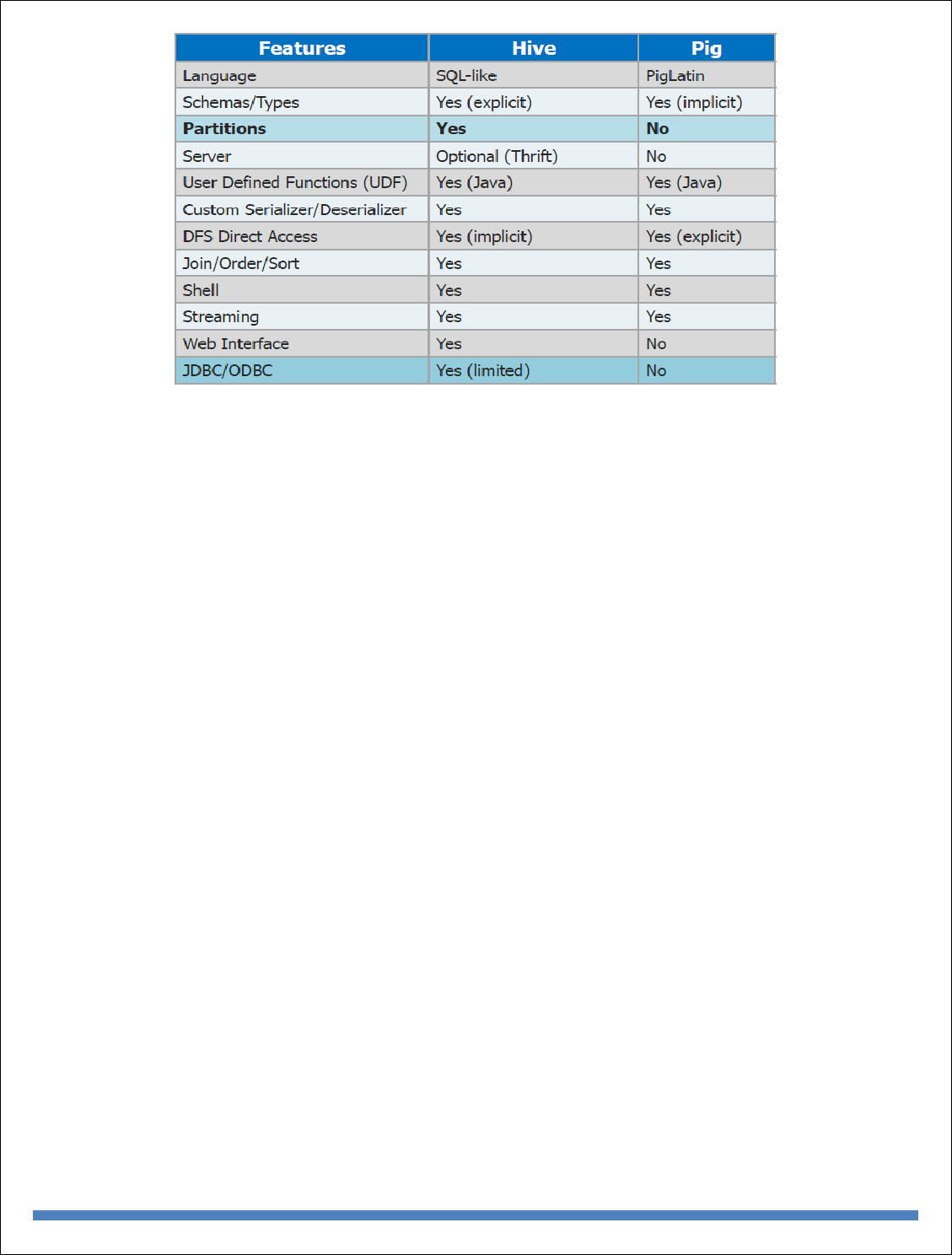

INTRODUCTION TO HIVE AND PIG

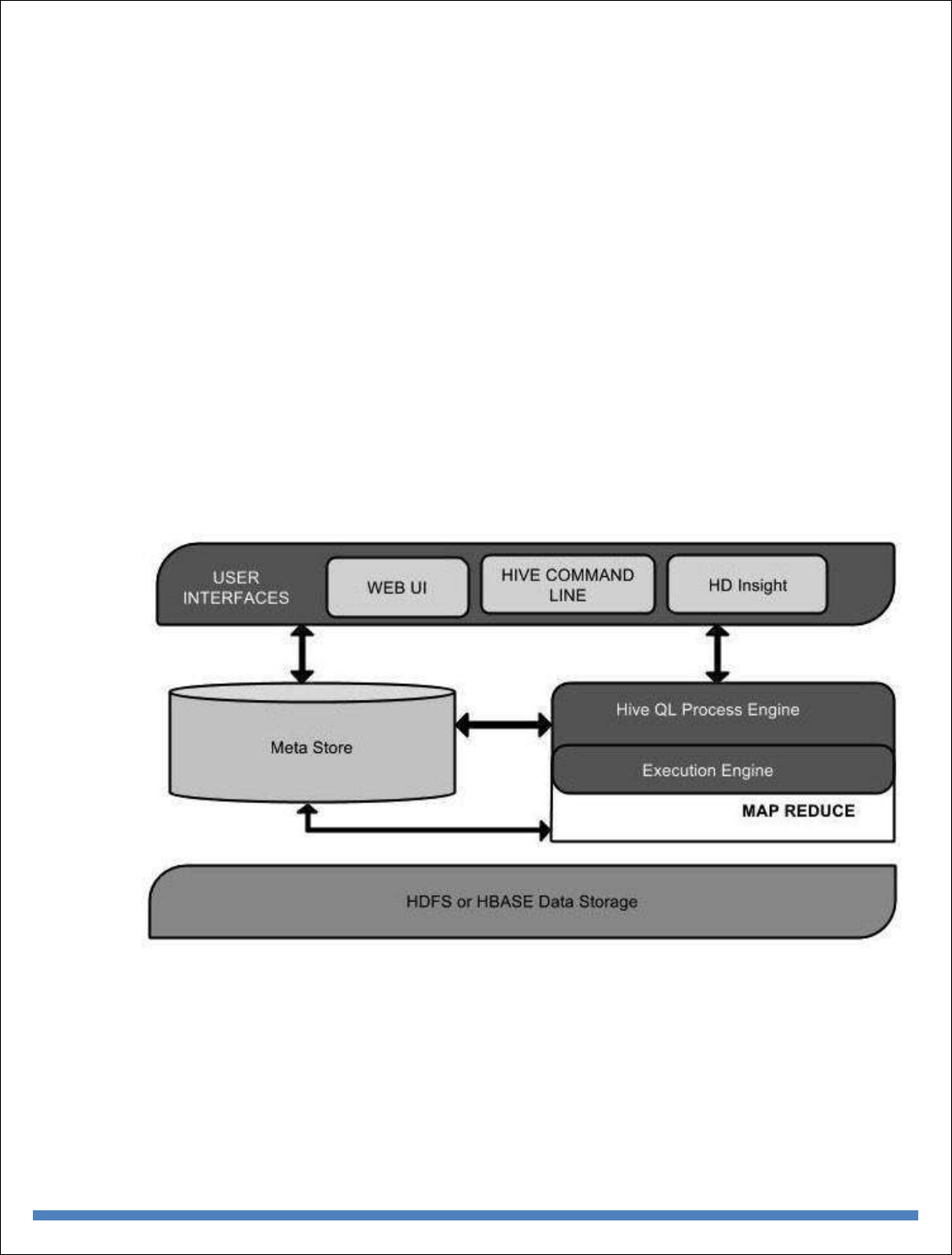

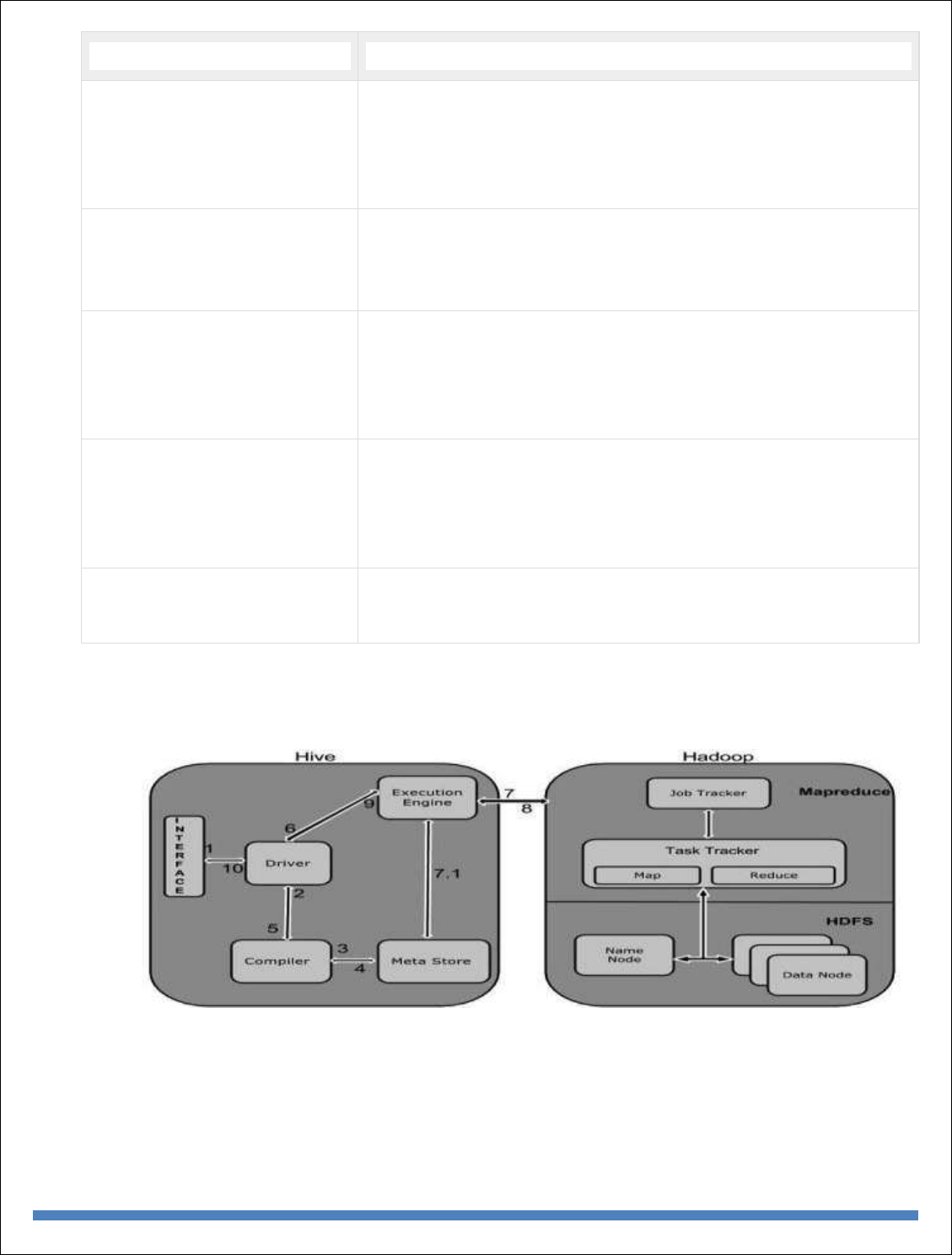

Hive: Introduction - Architecture - Data Types - File Formats - Hive Query Language Statements - Partitions - Bucketing - Views - Sub-Query - Joins - Aggregations - Group by and Having - RCFile Implementation - Hive User Defined Function - Serialization and Deserialization. Pig: Introduction - Anatomy - Features - Philosophy - Use Case for Pig - Pig Latin Overview - Pig Primitive Data Types - Running Pig - Execution Modes of Pig - HDFS Commands - Relational Operators - Eval Function - Complex Data Types - Piggy Bank - User-Defined Functions - Parameter Substitution - Diagnostic Operator - Word Count Example using Pig - Pig at Yahoo! - Pig Versus Hive

UNIT V

INTRODUCTION TO DATA ANALYTICS WITH R

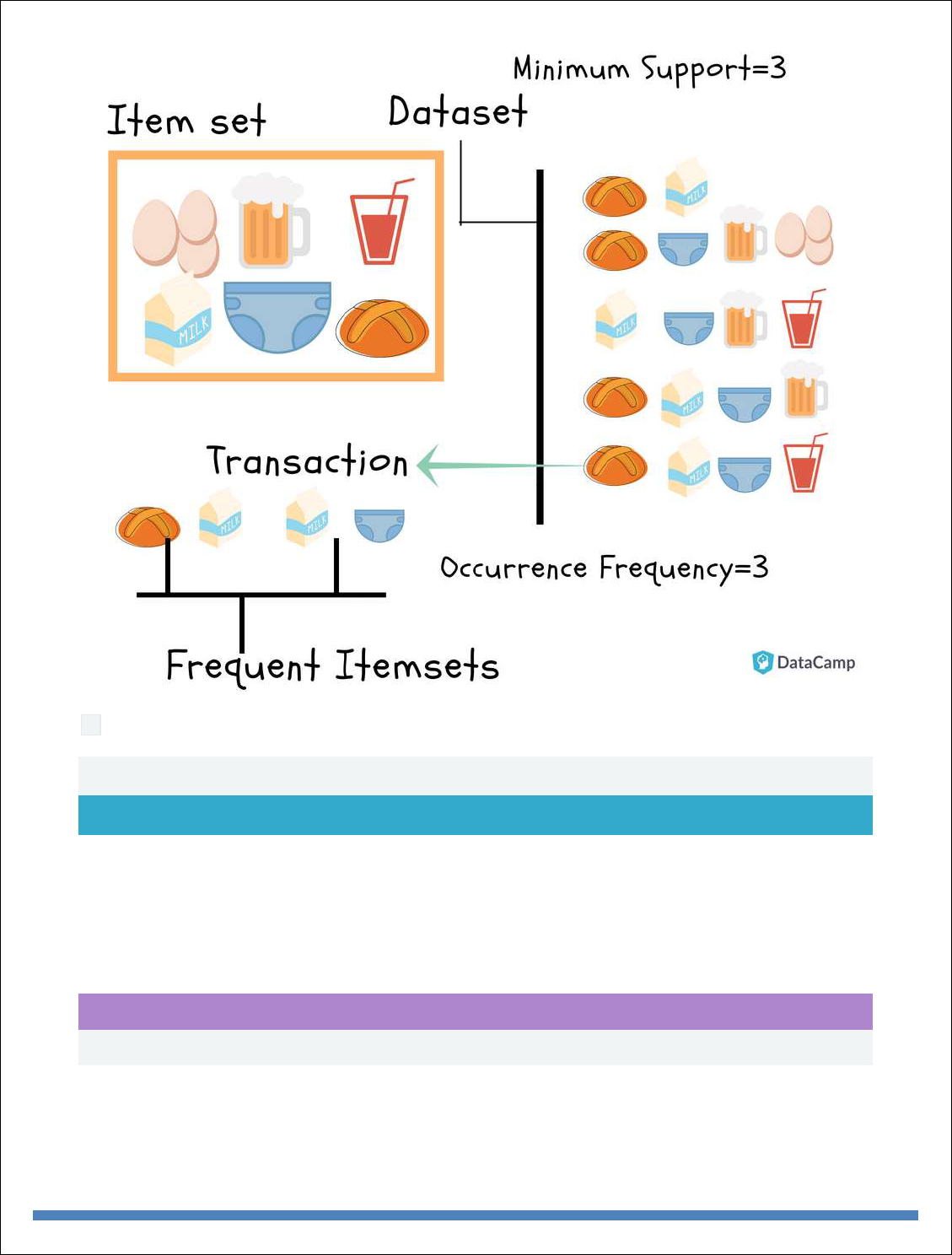

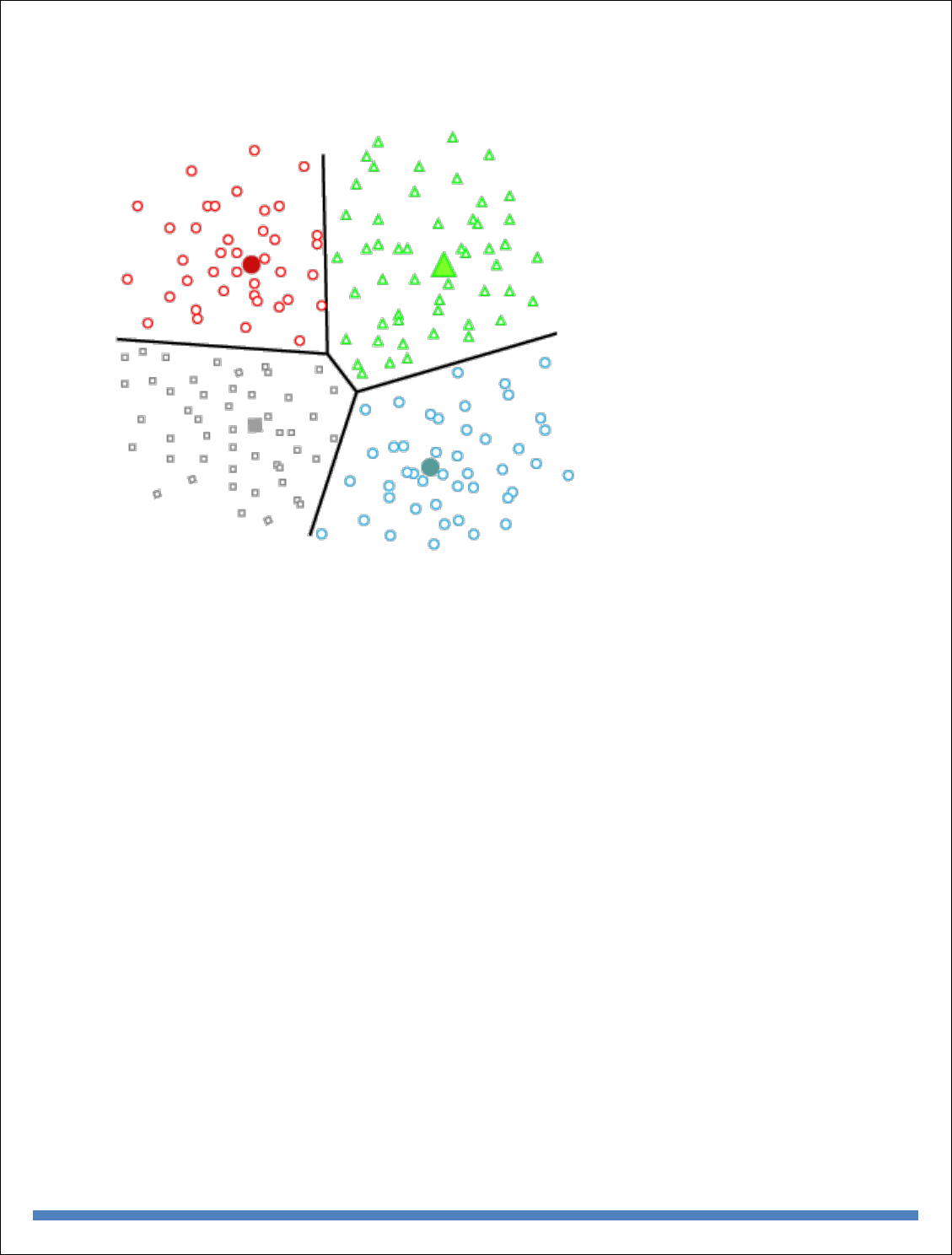

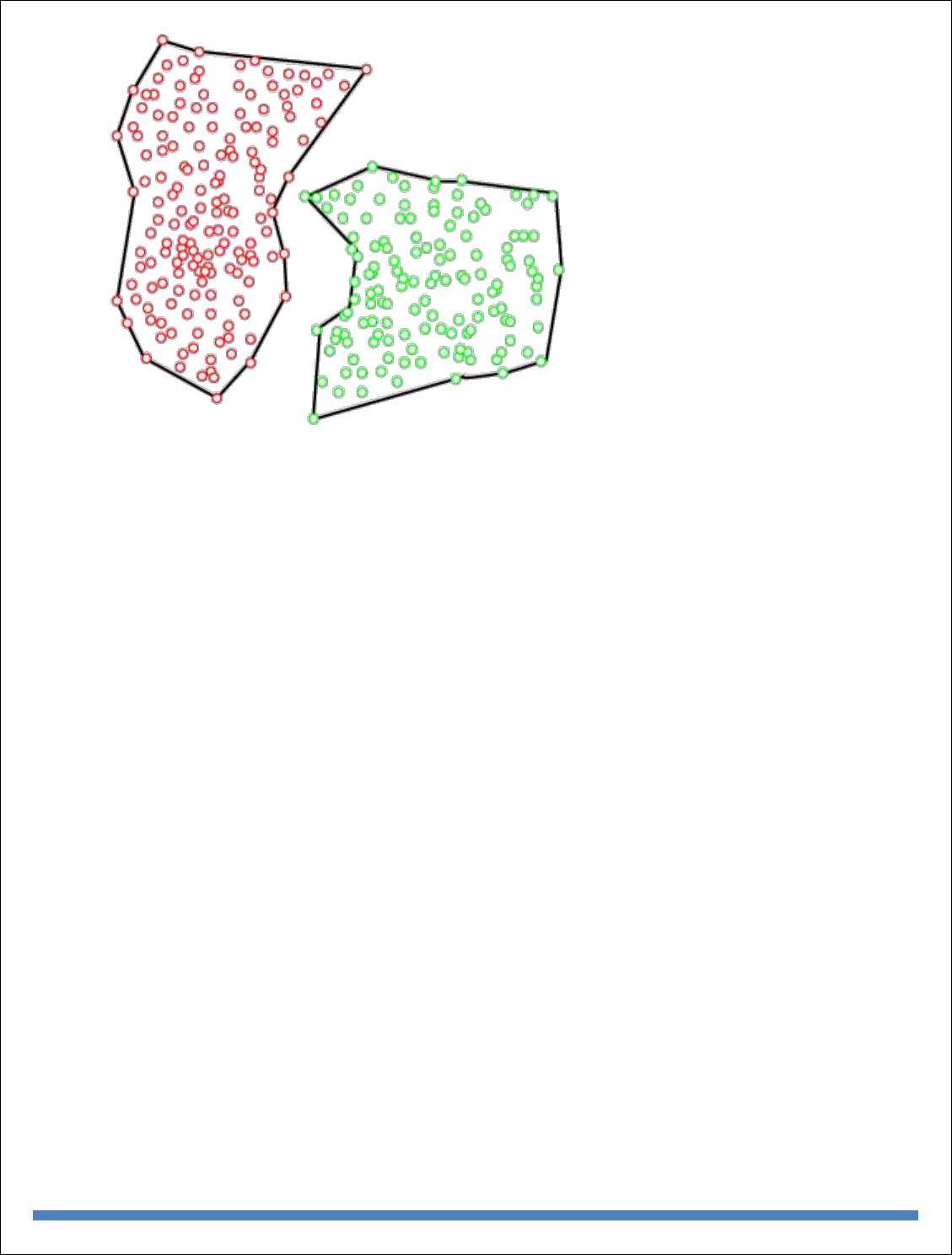

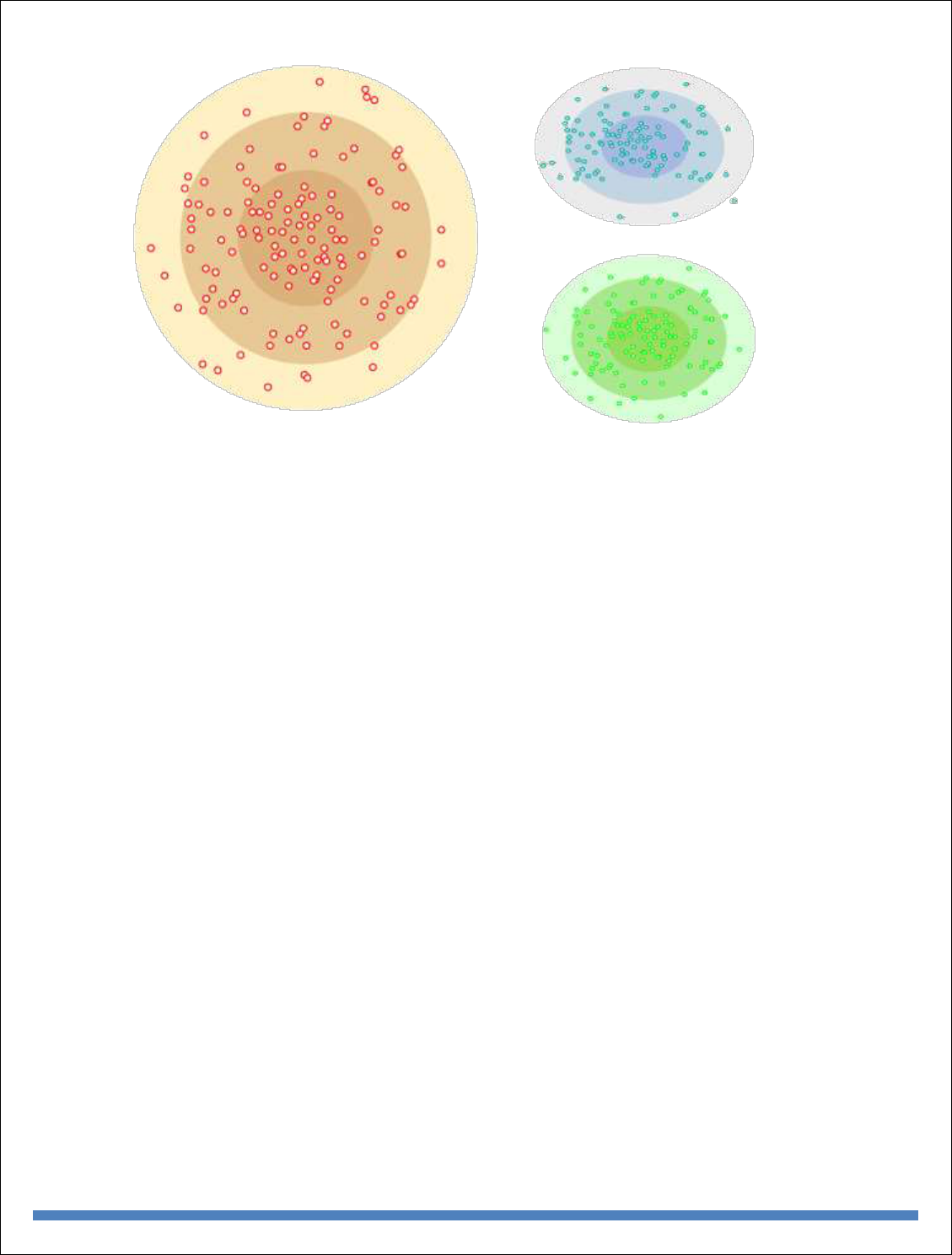

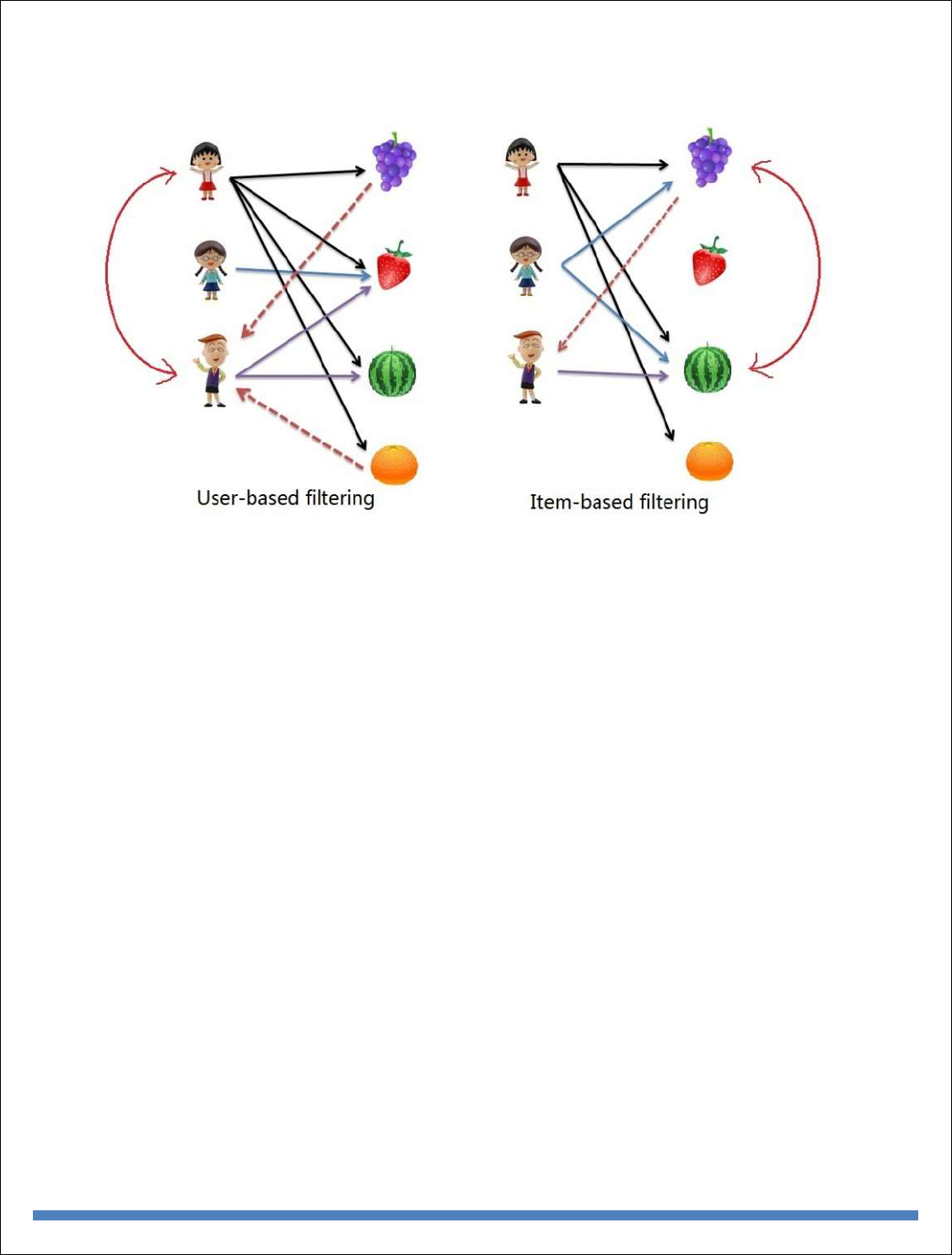

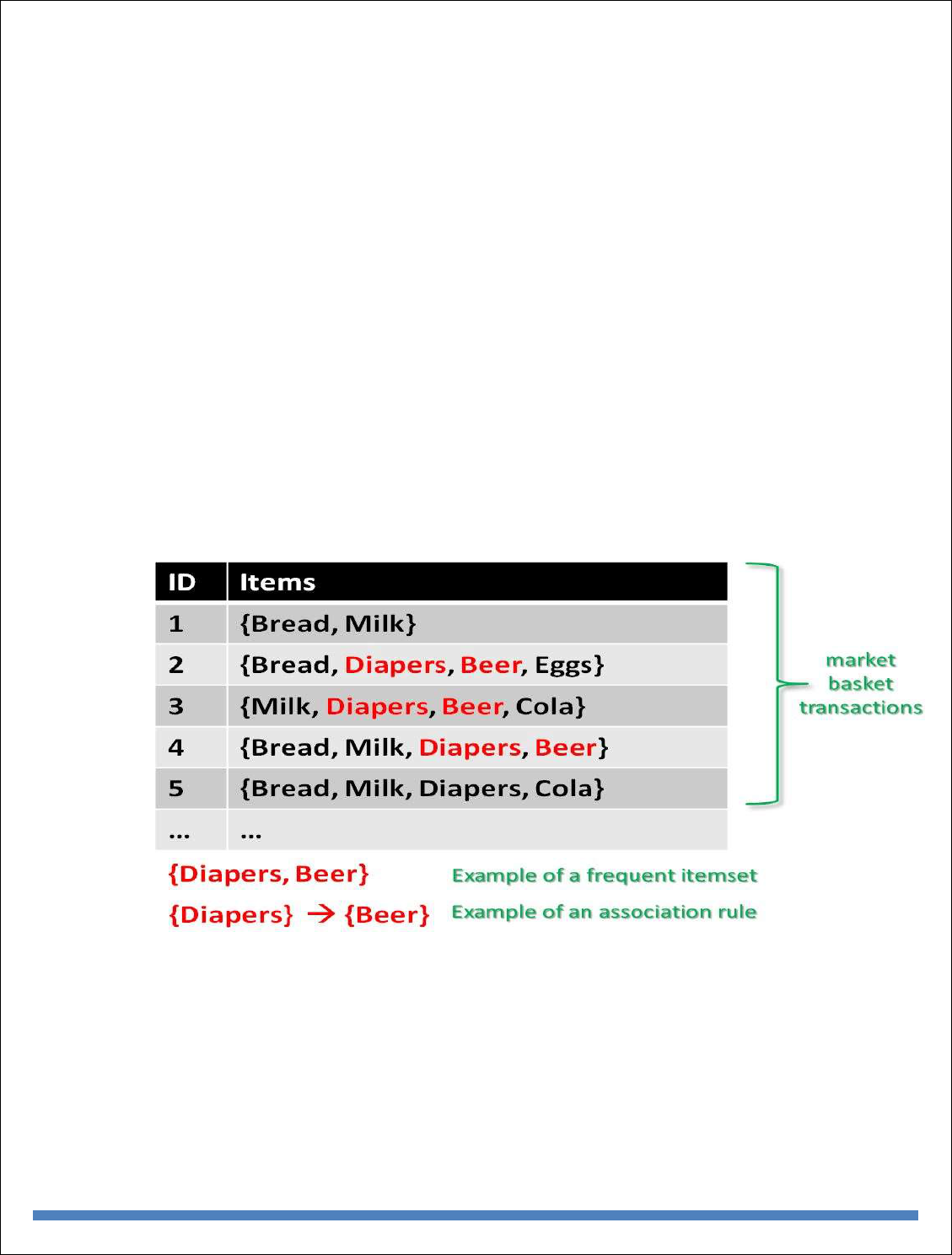

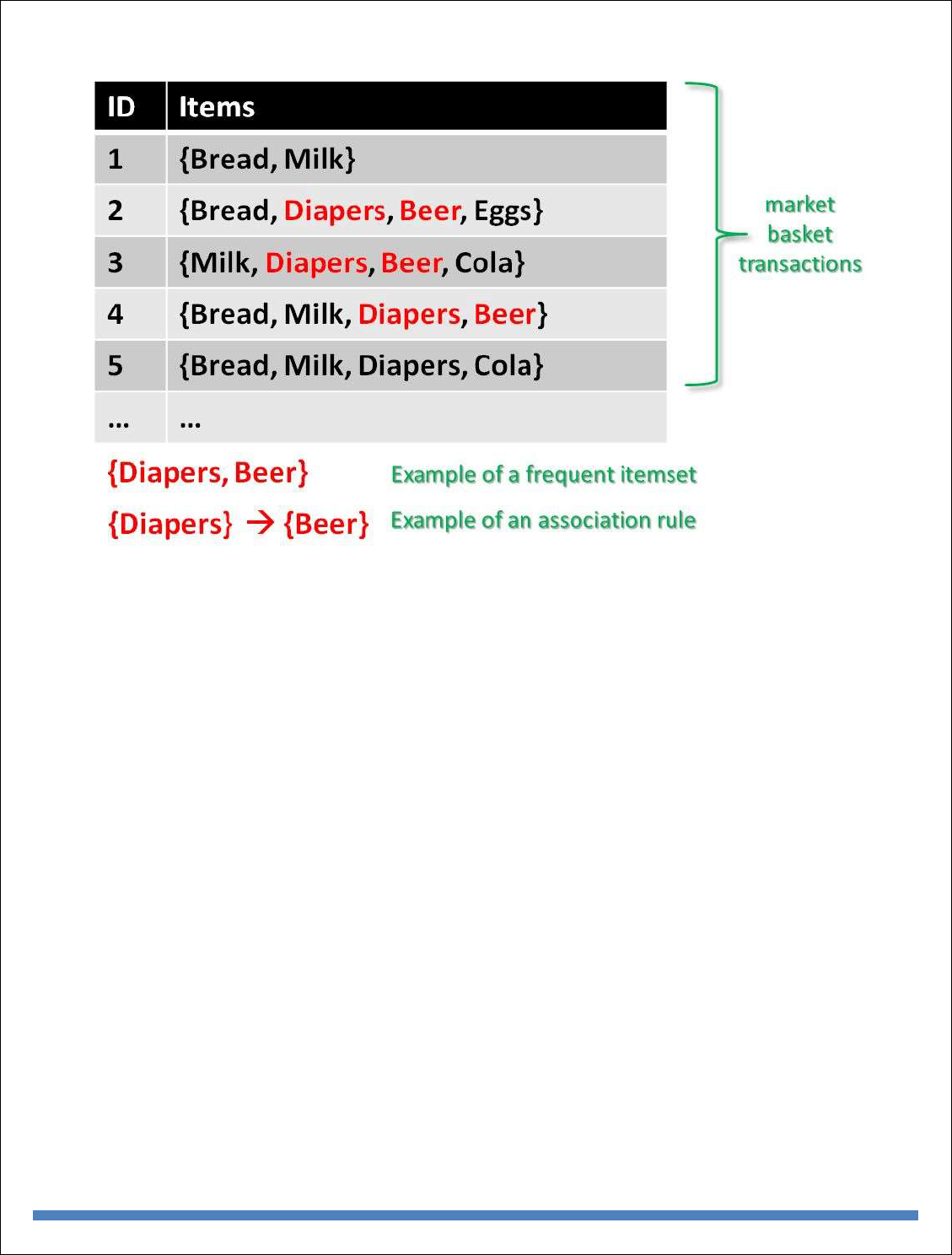

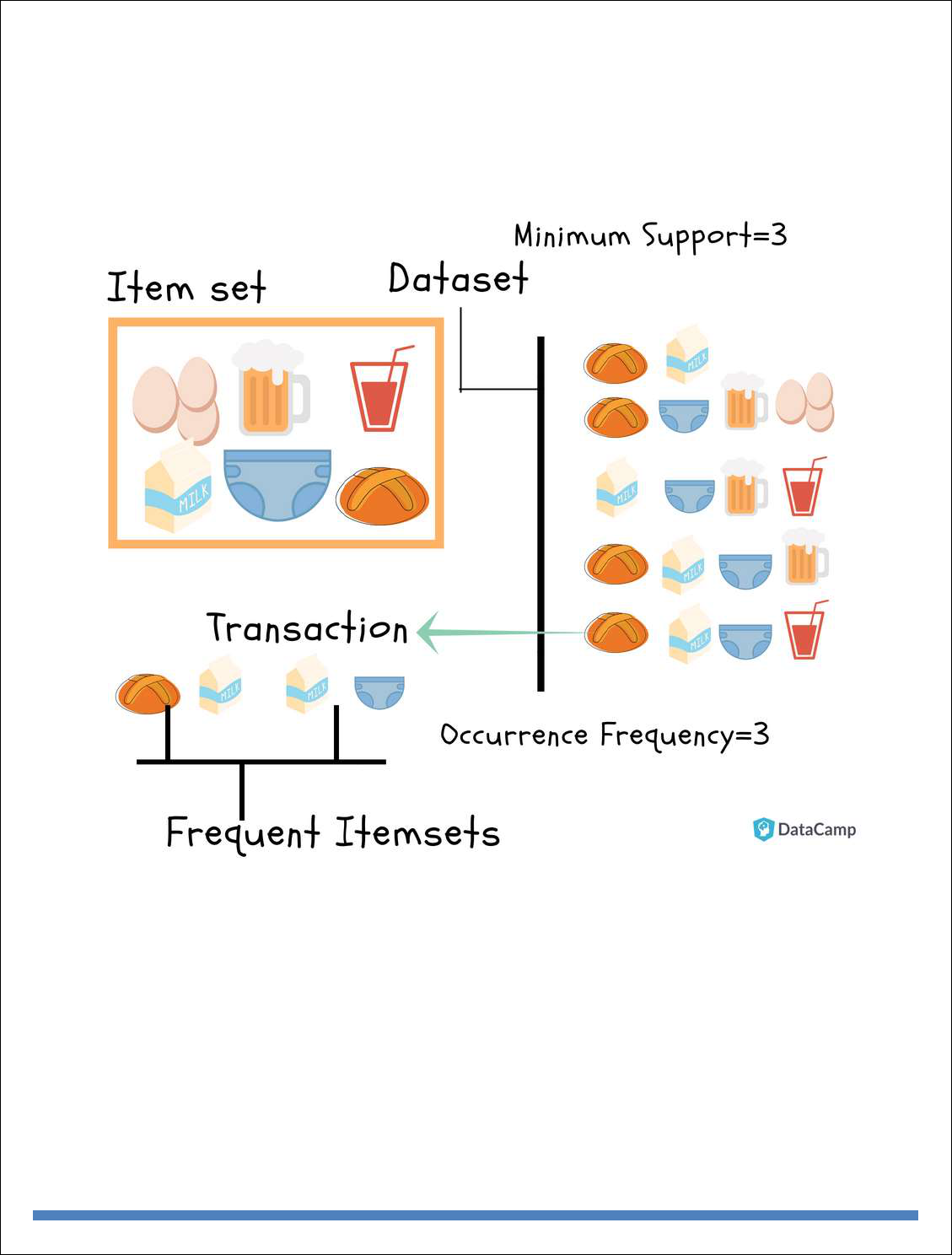

Machine Learning: Introduction, Supervised Learning, Unsupervised Learning, Machine Learning Algorithms: Regression Model, Clustering, Collaborative Filtering, Association Rule Mining, Decision Tree, Big Data Analytics with BigR.

Reference Book:

1. Judith Hurwitz, Alan Nugent, Fern Halper, Marcia Kaufman, “Big Data for Dummies”, John Wiley & Sons, Inc., 2013.

2. Tom White, “Hadoop: The Definitive Guide”, O’Reilly Publications, Fourth Edition, 2015.

3. Dirk deRoos, Paul C. Zikopoulos, Roman B. Melnyk, Bruce Brown, Rafael Coss, “Hadoop For Dummies”, Wiley Publications, 2014.

4. Robert D. Schneider, “Hadoop For Dummies”, John Wiley & Sons, Inc., 2012.

5. Paul Zikopoulos, “Understanding Big Data: Analytics for Enterprise Class Hadoop and Streaming Data”, McGraw Hill, 2012.

6. Chuck Lam, “Hadoop in Action”, Dreamtech Publications, 2010.

Text Book:

1. Seema Acharya, Subhashini Chellappan, “Big Data and Analytics”, Wiley Publications, First Edition, 2015.

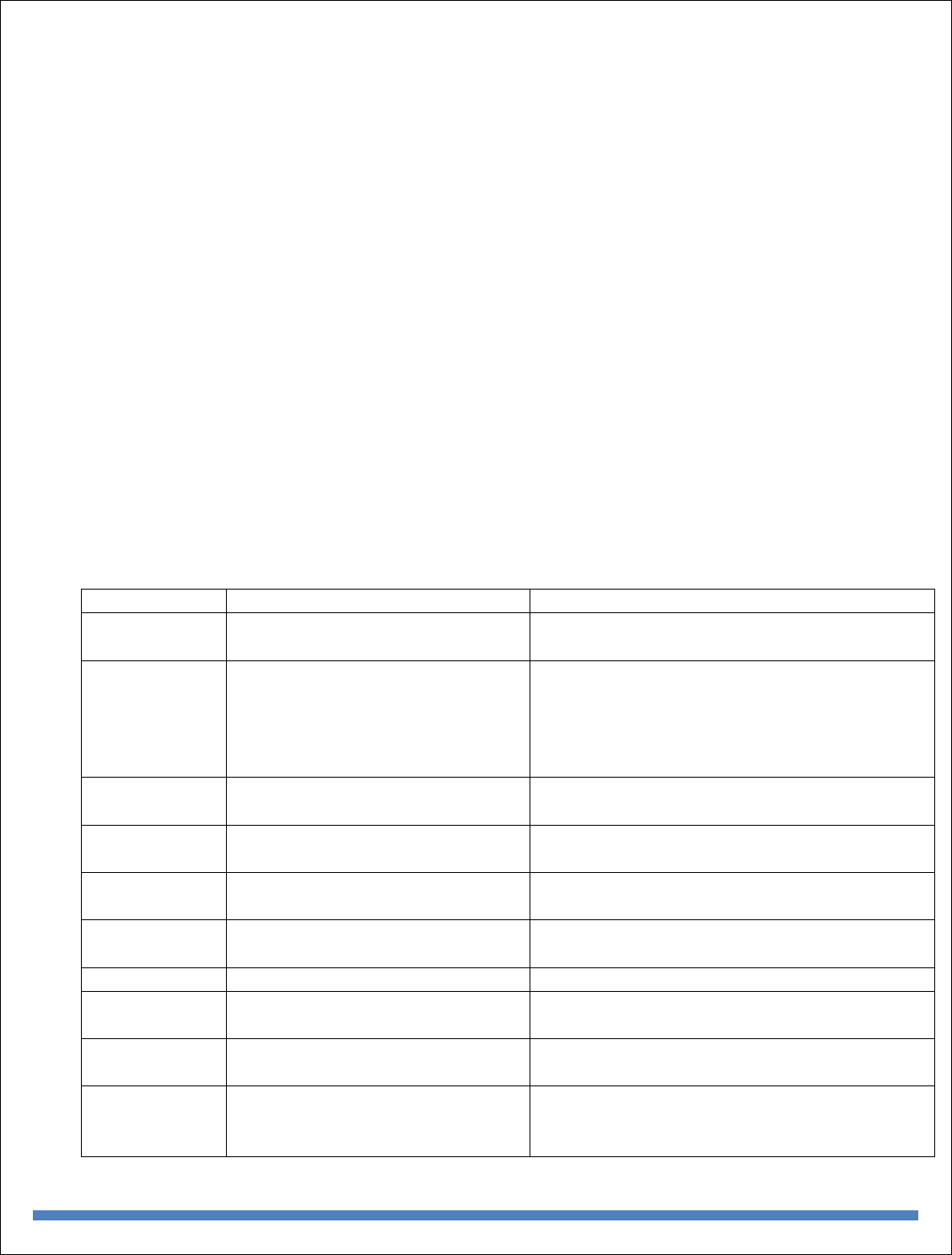

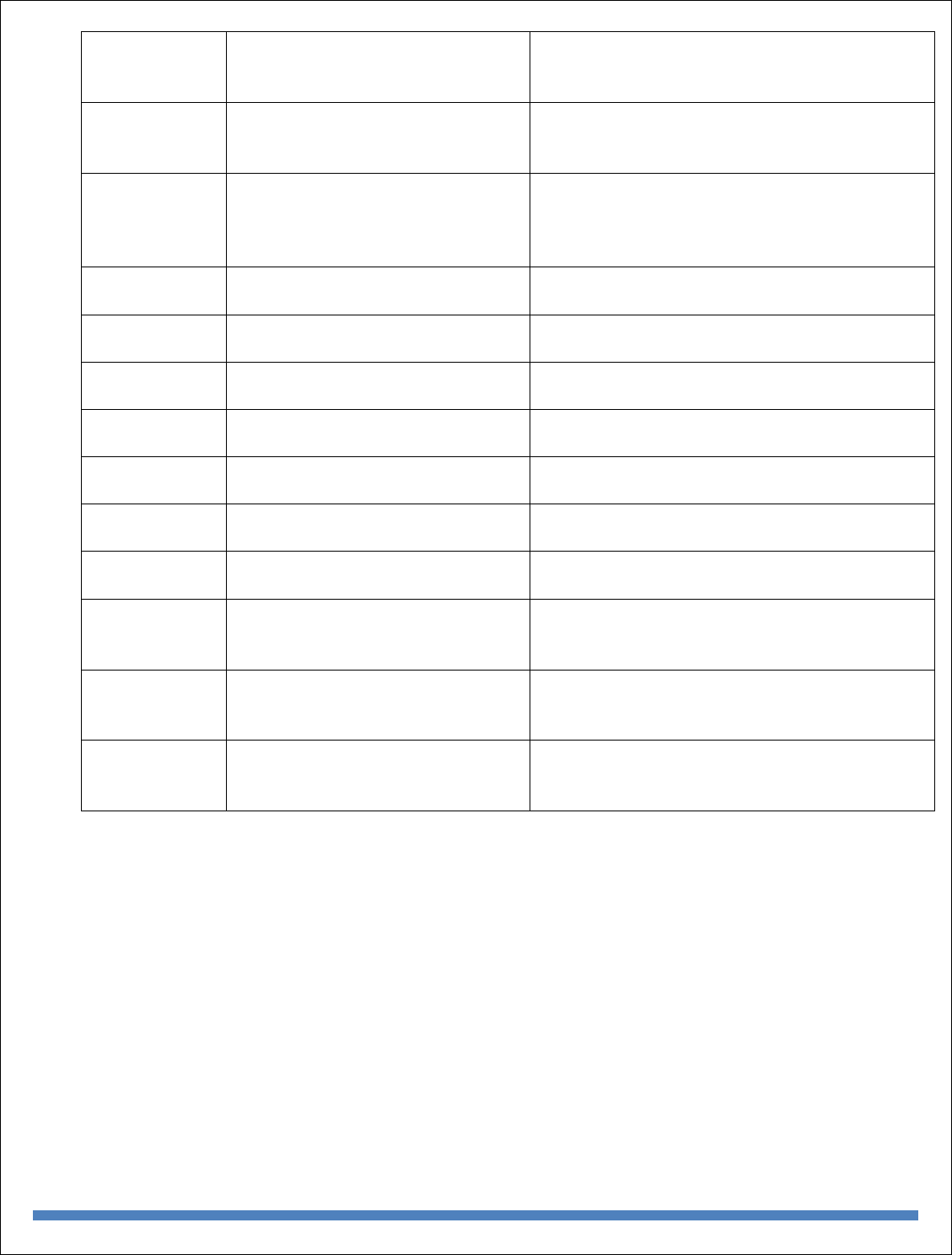

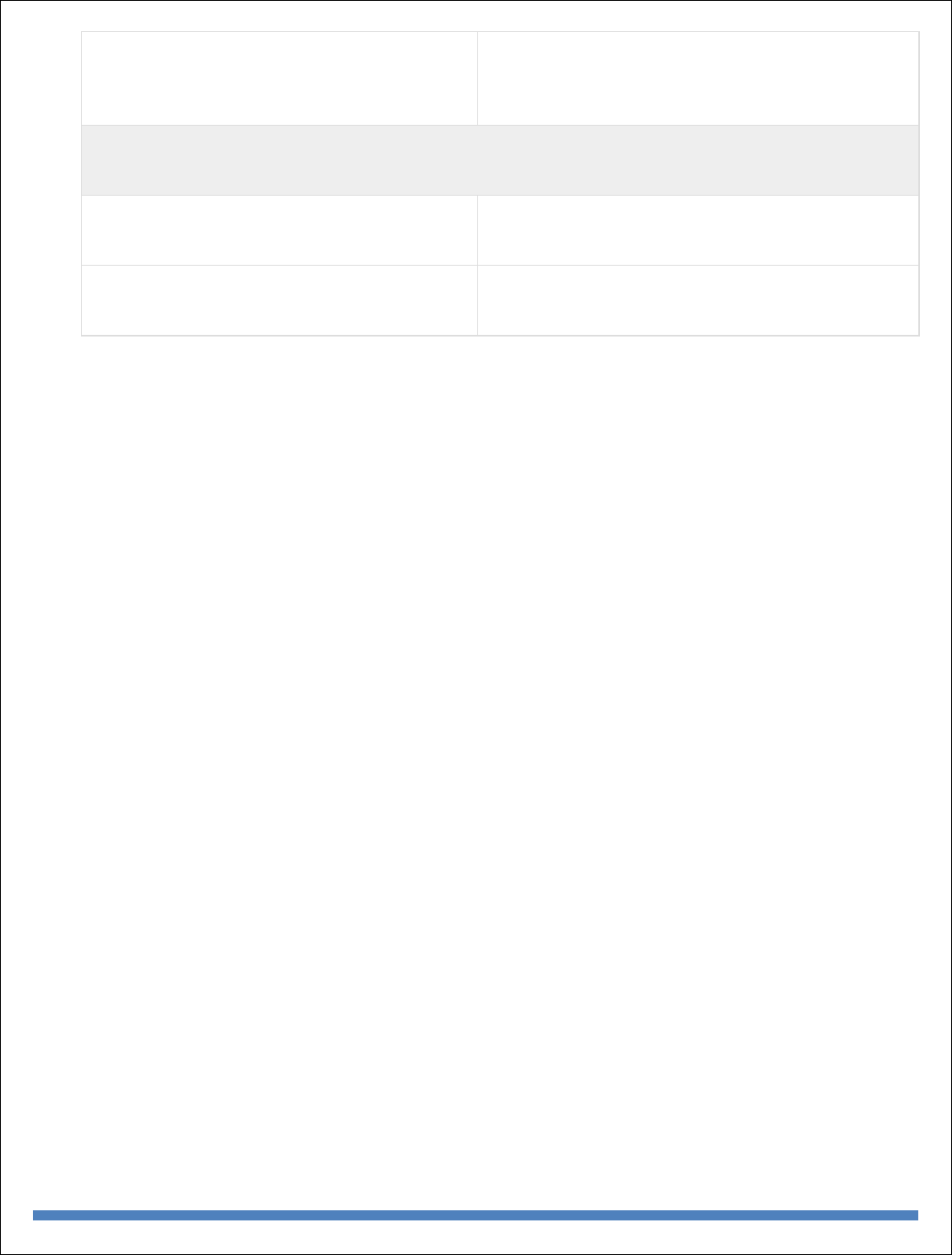

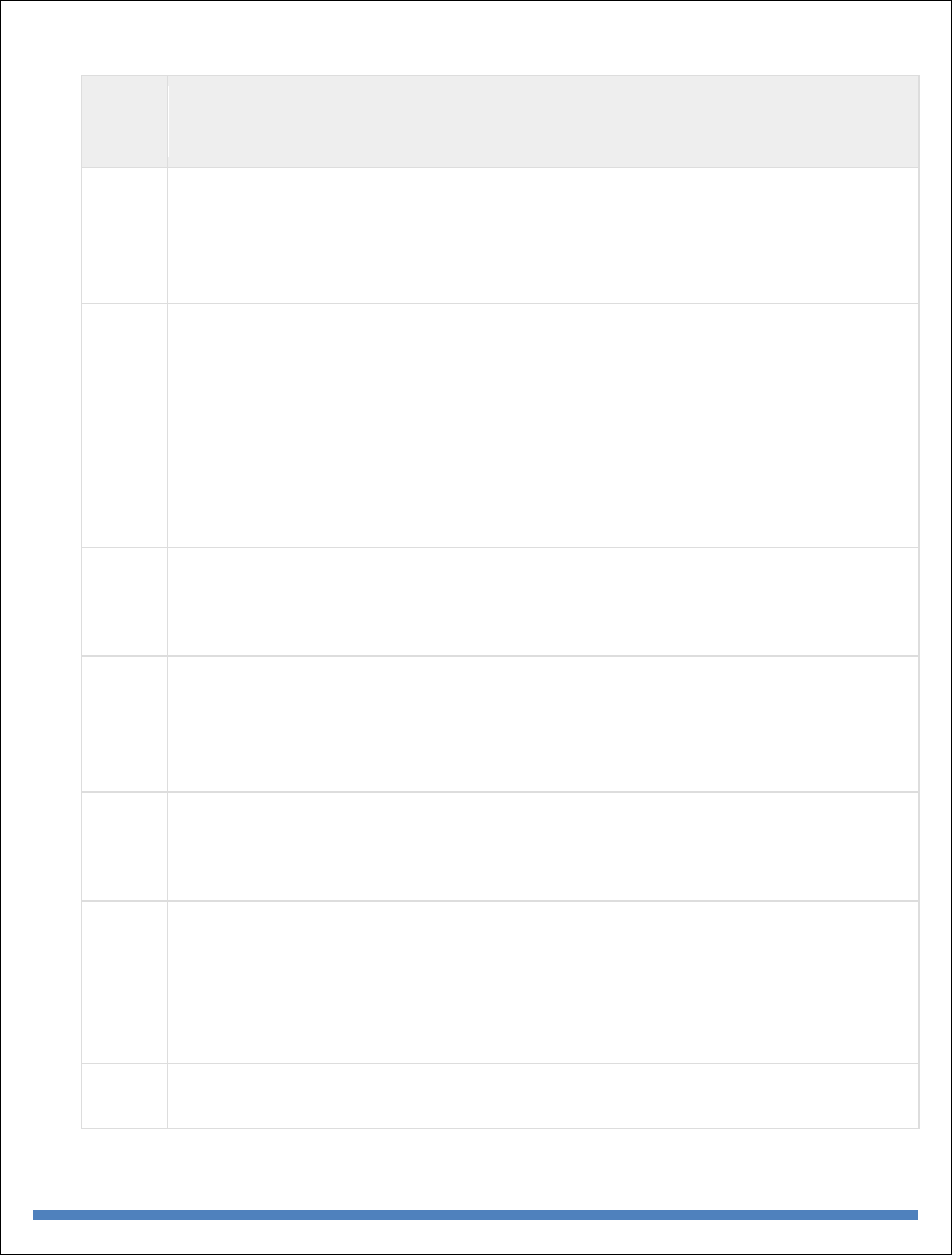

INDEX

| S. No | Unit | Topic |
|---|---|---|
| 1 | I | INTRODUCTION TO BIG DATA AND ANALYTICS: Classification of Digital Data, Structured and Unstructured Data - Introduction to Big Data |
| 2 | I | Why Big Data - Traditional Business Intelligence versus Big Data - Data Warehouse and Hadoop Environment |
| 3 | I | Big Data Analytics: Classification of Analytics - Challenges - Big Data Analytics Importance |
| 4 | I | Data Science - Data Scientist - Terminologies used in Big Data Environments |
| 5 | I | Basically Available, Soft State, Eventual Consistency - Top Analytics Tools |
| 6 | II | INTRODUCTION TO TECHNOLOGY LANDSCAPE: NoSQL, Comparison of SQL and NoSQL, Hadoop - RDBMS Versus Hadoop |
| 7 | II | Distributed Computing Challenges - Hadoop Overview - Hadoop Distributed File System - Processing Data with Hadoop |
| 8 | II | Managing Resources and Applications with Hadoop YARN - Interacting with Hadoop Ecosystem |
| 9 | III | INTRODUCTION TO MONGODB AND MAPREDUCE PROGRAMMING: MongoDB: Why MongoDB - Terms used in RDBMS and MongoDB - Data Types - MongoDB Query Language |
| 10 | III | MapReduce: Mapper - Reducer - Combiner - Partitioner - Searching - Sorting - Compression |
| 11 | IV | INTRODUCTION TO HIVE AND PIG: Hive: Introduction - Architecture - Data Types - File Formats - Hive Query Language Statements |
| 12 | IV | Partitions - Bucketing - Views - Sub-Query - Joins - Aggregations - Group by and Having - RCFile Implementation |
| 13 | IV | Hive User Defined Function - Serialization and Deserialization. Pig: Introduction |
| 14 | IV | Anatomy - Features - Philosophy - Use Case for Pig - Pig Latin Overview - Pig Primitive Data Types |
| 15 | IV | Running Pig - Execution Modes of Pig - HDFS Commands - Relational Operators - Eval Function |
| 16 | IV | Complex Data Types - Piggy Bank - User-Defined Functions - Parameter Substitution - Diagnostic Operator |
| 17 | IV | Word Count Example using Pig - Pig at Yahoo! - Pig Versus Hive |
| 18 | V | INTRODUCTION TO DATA ANALYTICS WITH R: Machine Learning: Introduction, Supervised Learning, Unsupervised Learning |
| 19 | V | Machine Learning Algorithms: Regression Model, Clustering, Collaborative Filtering, Association Rule Mining, Decision Tree, Big Data Analytics with BigR |

BIG DATA ANALYTICS

1

UNIT – I

What is Big Data?

According to Gartner, the definition of Big Data is:

“Big data is high-volume, high-velocity and/or high-variety information assets that demand cost-effective, innovative forms of information processing for enhanced insight and decision making.”

This definition clearly answers the “What is Big Data?” question – Big Data refers to complex and

large data sets that have to be processed and analyzed to uncover valuable information that can

benefit businesses and organizations.

However, a few basic tenets of Big Data make it even simpler to answer what Big Data is:

It refers to a massive amount of data that keeps on growing exponentially with time.

It is so voluminous that it cannot be processed or analyzed using conventional data

processing techniques.

It includes data mining, data storage, data analysis, data sharing, and data visualization.

The term is all-encompassing: it covers the data, the data frameworks, and the tools and techniques used to process and analyze the data.

The History of Big Data

Although the concept of big data itself is relatively new, the origins of large data sets go back to

the 1960s and '70s when the world of data was just getting started with the first data centers and

the development of the relational database.

Around 2005, people began to realize just how much data users generated through Facebook,

YouTube, and other online services. Hadoop (an open-source framework created specifically to

store and analyze big data sets) was developed that same year. NoSQL also began to gain

popularity during this time.

The development of open-source frameworks, such as Hadoop (and more recently, Spark) was

essential for the growth of big data because they make big data easier to work with and cheaper to

store. In the years since then, the volume of big data has skyrocketed. Users are still generating

huge amounts of data—but it’s not just humans who are doing it.

With the advent of the Internet of Things (IoT), more objects and devices are connected to the

internet, gathering data on customer usage patterns and product performance. The emergence of

machine learning has produced still more data.

While big data has come far, its usefulness is only just beginning. Cloud computing has expanded

big data possibilities even further. The cloud offers truly elastic scalability, where developers can

simply spin up ad hoc clusters to test a subset of data.


Benefits of Big Data and Data Analytics

Big data makes it possible for you to gain more complete answers because you have more

information.

More complete answers mean more confidence in the data—which means a completely

different approach to tackling problems.

Types of Big Data

Now that we know what big data is, let’s look at the types of big data:

a) Structured

By structured data, we mean data that can be processed, stored, and retrieved in a fixed format. It refers to highly organized information that can be readily and seamlessly stored in, and accessed from, a database by simple search algorithms. For instance, the employee table in a company database is structured: the employee details, job positions, salaries, etc. are present in an organized manner.

b) Unstructured

Unstructured data refers to data that lacks any specific form or structure. This makes unstructured data very difficult and time-consuming to process and analyze. The free-text body of an email is an example of unstructured data.

c) Semi-structured

Semi-structured data is the third type of big data. It contains elements of both formats mentioned above, structured and unstructured. To be precise, it refers to data that has not been organized into a particular repository (database) but still contains vital information or tags that separate individual elements within the data. JSON and XML documents are common examples.
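To make the three types concrete, here is a minimal Python sketch using hypothetical employee data: a structured row with a fixed schema, a semi-structured JSON document whose tags (keys) label each element, and unstructured free text with no schema at all. The field names and values are illustrative assumptions only.

```python
import json
from typing import NamedTuple

# Structured: every record has the same fixed fields (like a row in an employee table).
class Employee(NamedTuple):
    emp_id: int
    name: str
    position: str
    salary: int

structured_row = Employee(101, "Asha", "Analyst", 50000)

# Semi-structured: no fixed table schema, but tags (keys) label each element,
# and different records may carry different fields.
semi_structured = json.loads(
    '{"emp_id": 102, "name": "Ravi", "skills": ["SQL", "Hadoop"]}'
)

# Unstructured: free text such as the body of an email; any fields must be
# extracted by further processing.
unstructured = "Hi team, Ravi joined the analytics group last week and knows Hadoop."

print(structured_row.salary)              # direct access by a known column
print(semi_structured.get("skills", []))  # access by tag; the field may be absent
print("Hadoop" in unstructured)           # only a crude text search without extra processing
```

The structured row can be queried by column name, the JSON document by tag, while the free text only supports a plain substring search until some extraction step adds structure.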

Characteristics of Big Data

Back in 2001, analyst Doug Laney (then at META Group, which Gartner later acquired) listed the 3 ‘V’s of Big Data: Variety, Velocity, and Volume.

Taken together, these three characteristics are enough to identify big data. Let’s look at each of them in depth:

a) Variety

Variety of Big Data refers to structured, unstructured, and semi-structured data that is gathered from multiple sources. While in the past data could only be collected from spreadsheets and databases, today data comes in an array of forms such as emails, PDFs, photos, videos, audio, social media posts, and much more.


b) Velocity

Velocity refers to the speed at which data is created, often in real time. In a broader perspective, it comprises the rate of change, the linking of incoming data sets that arrive at varying speeds, and bursts of activity.

c) Volume

Volume refers to the sheer amount of data. Big Data indicates huge ‘volumes’ of data generated daily from various sources such as social media platforms, business processes, machines, networks, and human interactions. Such large amounts of data are stored in data warehouses and, increasingly, in distributed storage systems.

Why is Big Data Important?

The importance of big data does not revolve around how much data a company has but how a

company utilizes the collected data. Every company uses data in its own way; the more efficiently

a company uses its data, the more potential it has to grow. The company can take data from any

source and analyze it to find answers which will enable:

1. Cost Savings: Some tools of Big Data like Hadoop and Cloud-Based Analytics can

bring cost advantages to business when large amounts of data are to be stored and these

tools also help in identifying more efficient ways of doing business.

2. Time Reductions: The high speed of tools like Hadoop and in-memory analytics helps businesses identify new sources of data easily, analyze data immediately, and make quick decisions based on what they learn.

3. Understand the market conditions: By analyzing big data you can get a better

understanding of current market conditions. For example, by analyzing customers’

purchasing behaviors, a company can find out the products that are sold the most and

produce products according to this trend. By this, it can get ahead of its competitors.

4. Control online reputation: Big data tools can perform sentiment analysis, so you can get feedback about who is saying what about your company. If you want to monitor and improve the online presence of your business, big data tools can help.

5. Using Big Data Analytics to Boost Customer Acquisition and Retention

The customer is the most important asset any business depends on. There is no single

business that can claim success without first having to establish a solid customer base.

However, even with a customer base, a business cannot afford to disregard the high

competition it faces. If a business is slow to learn what customers are looking for, then

it is very easy to begin offering poor quality products. In the end, loss of clientele will

result, and this creates an adverse overall effect on business success. The use of big data

allows businesses to observe various customer related patterns and trends. Observing

customer behavior is important to trigger loyalty.

6. Using Big Data Analytics to Solve Advertisers’ Problems and Offer Marketing Insights

Big data analytics can help change all business operations. This includes matching customer expectations, changing the company’s product line, and ensuring that marketing campaigns are effective.

7. Big Data Analytics As a Driver of Innovations and Product Development

Another huge advantage of big data is the ability to help companies innovate and

redevelop their products.
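Sentiment analysis (item 4 above) can be illustrated with a deliberately tiny lexicon-based sketch in Python. Real big data tools use far richer lexicons or trained models; the word lists and the scoring rule below are assumptions chosen only to show the idea of scoring each post and labelling it.

```python
import re

# Hypothetical sentiment lexicon; production systems use much larger lexicons or trained models.
POSITIVE = {"good", "great", "love", "excellent", "fast"}
NEGATIVE = {"bad", "poor", "hate", "slow", "broken"}

def sentiment_score(text: str) -> int:
    """Return (number of positive words) minus (number of negative words) for one post."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

def label(text: str) -> str:
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

posts = [
    "Love the new app, delivery was fast!",
    "Support was slow and the product arrived broken.",
    "Ordered a phone case today.",
]
labels = [label(p) for p in posts]
print(labels)  # ['positive', 'negative', 'neutral']
```

Applied to millions of posts, the same score-then-aggregate pattern gives a company an overview of who is saying what about it.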

Business Intelligence vs Big Data

Although Big Data and Business Intelligence are two technologies used to analyze data to help

companies in the decision-making process, there are differences between both of them. They differ

in the way they work as much as in the type of data they analyze.

Traditional BI methodology is based on the principle of grouping all business data into a central

server. Typically, this data is analyzed in offline mode, after storing the information in an

environment called Data Warehouse. The data is structured in a conventional relational database

with an additional set of indexes and forms of access to the tables (multidimensional cubes).

A Big Data solution differs from BI in several respects. These are the main differences between Big Data and Business Intelligence:

1. In a Big Data environment, information is stored on a distributed file system rather than on a central server. Because the data is spread (and typically replicated) across many machines, this is a more fault-tolerant and flexible space.

2. Big Data solutions carry the processing functions to the data, rather than the data to the functions. Because the analysis is centered where the information is stored, it is easier to handle larger amounts of information in a more agile way.

3. Big Data can analyze data in different formats, both structured and unstructured. The

volume of unstructured data (those not stored in a traditional database) is growing at levels

much higher than the structured data. Nevertheless, its analysis carries different challenges.

Big Data solutions solve them by allowing a global analysis of various sources of

information.

4. Data processed by Big Data solutions can be historical or come from real-time sources.

Thus, companies can make decisions that affect their business in an agile and efficient way.

5. Big Data technology uses massively parallel processing (MPP) concepts, which improve the speed of analysis. With MPP, many instructions are executed simultaneously: a job is divided into several parts that execute in parallel, and at the end the partial results are combined and presented. This allows you to analyze large volumes of information quickly.
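Points 2 and 5 (moving the processing to the data, and splitting a job into parallel parts whose results are combined) can be sketched in Python. This is a single-machine illustration under stated assumptions: each inner list stands in for a data block stored on a different node, and a thread pool stands in for the cluster's workers. Only the small per-partition summaries, not the raw records, are sent back to be merged.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumption: each inner list represents a block of sales amounts stored on a separate node.
partitions = [
    [120, 80, 45],
    [300, 15],
    [60, 60, 60, 20],
]

def local_aggregate(block):
    """Runs 'on the node' that holds the block: returns a tiny summary, not the raw data."""
    return (sum(block), len(block))

# Run the local step on every partition in parallel (threads stand in for cluster nodes).
with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
    partial_results = list(pool.map(local_aggregate, partitions))

# Combine the partial results into the overall answer.
total = sum(s for s, _ in partial_results)
count = sum(n for _, n in partial_results)
print(partial_results)  # [(245, 3), (315, 2), (200, 4)]
print(total, count, total / count)
```

In CPython, threads mainly illustrate the structure of the computation; on a real cluster the local step runs on separate machines next to their data blocks, which is where the speed-up comes from.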


Big Data vs Data Warehouse

Big Data has become the reality of doing business for organizations today. There is a boom in the

amount of structured as well as raw data that floods every organization daily. If this data is

managed well, it can lead to powerful insights and quality decision making.

Big data analytics is the process of examining large data sets containing a variety of data types to discover knowledge, identify interesting patterns, and establish relationships that reveal market trends, customer preferences, and other useful information for solving problems. Companies and businesses that implement Big Data Analytics often reap several business benefits; above all, they implement it because they want to make more informed business decisions.

A data warehouse (DW) is a collection of corporate information and data derived from operational

systems and external data sources. A data warehouse is designed to support business decisions by

allowing data consolidation, analysis and reporting at different aggregate levels. Data is populated

into the Data Warehouse through the processes of extraction, transformation and loading (ETL

tools). Data analysis tools, such as business intelligence software, access the data within the

warehouse.
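The three ETL steps can be sketched with Python's standard library, assuming a hypothetical CSV export from an operational system as the source and an in-memory SQLite database standing in for the warehouse. A production warehouse load would use dedicated ETL tools; this only shows what each step does.

```python
import csv
import io
import sqlite3

# Extract: read raw rows from an operational export (hypothetical sample data).
raw_csv = """order_id,region,amount
1, south ,100.50
2,North,200
3,south,50.25
"""
rows = list(csv.DictReader(io.StringIO(raw_csv)))

# Transform: clean and standardize values (trim spaces, consistent casing, numeric types).
cleaned = [
    (int(r["order_id"]), r["region"].strip().title(), float(r["amount"]))
    for r in rows
]

# Load: insert into a warehouse table (in-memory SQLite stands in for the warehouse).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (order_id INTEGER, region TEXT, amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", cleaned)

# Reporting at an aggregate level, the way a BI tool would query the warehouse.
summary = conn.execute(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
).fetchall()
print(summary)  # [('North', 200.0), ('South', 150.75)]
```

Without the transform step, " south " and "south" would be reported as two different regions, which is why cleaning happens before loading.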

Hadoop Environment

Hadoop is changing the perception of handling Big Data, especially unstructured data. Let’s see how the Apache Hadoop software library, which is a framework, plays a vital role in handling Big Data. Apache Hadoop allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to a large number of machines, each offering local computation and storage. Rather than relying on hardware to provide high availability, the library itself is designed to detect and handle failures at the application layer, so it delivers a highly available service on top of a cluster of computers, each of which may be prone to failures.

A Hadoop distribution consists of

File system and OS level abstractions (the Hadoop Common package)

A MapReduce engine (either classic MapReduce, known as MR1, or MapReduce running on YARN, known as MR2)

The Hadoop Distributed File System (HDFS)

Java ARchive (JAR) files

Scripts needed to start Hadoop

Source code, documentation and a contribution section

Activities performed on Big Data


Store – Big data needs to be collected in a seamless repository, but it does not have to be stored in a single physical database.

Process – Processing is more demanding than in traditional systems in terms of cleansing, enriching, calculating, transforming, and running algorithms.

Access – Data makes no business sense if it cannot be easily searched, retrieved, and visually presented along business lines.

Classification of analytics

Descriptive analytics

Descriptive analytics is a statistical method that is used to search and summarize historical data in

order to identify patterns or meaning.

Data aggregation and data mining are two techniques used in descriptive analytics to explore historical data. Data is first gathered and sorted by data aggregation in order to make the datasets more manageable for analysts.

Data mining describes the next step of the analysis and involves a search of the data to identify

patterns and meaning. Identified patterns are analyzed to discover the specific ways that learners

interacted with the learning content and within the learning environment.

Advantages:

Quickly and easily report on the Return on Investment (ROI) by showing how performance

achieved business or target goals.

Identify gaps and performance issues early - before they become problems.

Identify specific learners who require additional support, regardless of how many students

or employees there are.

Identify successful learners in order to offer positive feedback or additional resources.

Analyze the value and impact of course design and learning resources.
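The two descriptive steps described above (data aggregation, then a search for patterns in what already happened) can be sketched with Python's standard library. The learner records and the "needs support" threshold of 60 are hypothetical, chosen to mirror this section's learning-analytics examples.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical historical records: (learner, module, score)
records = [
    ("anil", "m1", 82), ("anil", "m2", 75),
    ("bina", "m1", 45), ("bina", "m2", 52),
    ("chen", "m1", 91), ("chen", "m2", 88),
]

# Step 1, data aggregation: group the raw records per learner.
scores_by_learner = defaultdict(list)
for learner, _module, score in records:
    scores_by_learner[learner].append(score)

# Step 2, summarize and look for patterns in historical performance.
averages = {name: mean(s) for name, s in scores_by_learner.items()}
needs_support = sorted(n for n, avg in averages.items() if avg < 60)  # assumed threshold
top_learner = max(averages, key=averages.get)

print(averages)       # {'anil': 78.5, 'bina': 48.5, 'chen': 89.5}
print(needs_support)  # ['bina']
print(top_learner)    # chen
```

Note that nothing here forecasts the future: descriptive analytics only summarizes what the historical records already show.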

Predictive analytics

Predictive Analytics is a statistical method that utilizes algorithms and machine learning to identify trends in data and predict future behaviors.

The software for predictive analytics has moved beyond the realm of statisticians and is becoming

more affordable and accessible for different markets and industries, including the field of learning

& development.


For online learning specifically, predictive analytics is often found incorporated in the Learning

Management System (LMS), but can also be purchased separately as specialized software.

For the learner, predictive forecasting could be as simple as a dashboard located on the main screen

after logging in to access a course. Analyzing data from past and current progress, visual indicators

in the dashboard could be provided to signal whether the employee was on track with training

requirements.

Advantages:

Personalize the training needs of employees by identifying their gaps, strengths, and

weaknesses; specific learning resources and training can be offered to support individual

needs.

Retain Talent by tracking and understanding employee career progression and forecasting

what skills and learning resources would best benefit their career paths. Knowing what skills

employees need also benefits the design of future training.

Support employees who may be falling behind or not reaching their potential by offering

intervention support before their performance puts them at risk.

Simplified reporting and visuals that keep everyone updated when predictive forecasting

is required.
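To illustrate using historical data to predict future behavior, here is a minimal ordinary least-squares trend line in plain Python, applied to the dashboard idea above. It is an assumption-laden sketch: one input variable, a straight-line trend, hypothetical weekly progress data, and an assumed course deadline of week 8. Real predictive analytics would use richer models and validate them on held-out data.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical history: week number -> percentage of a training course completed.
weeks = [1, 2, 3, 4]
completed = [10, 20, 30, 40]

slope, intercept = fit_line(weeks, completed)
deadline_week = 8                                  # assumed deadline
forecast_week_8 = slope * deadline_week + intercept
on_track = forecast_week_8 >= 100                  # dashboard indicator
print(slope, intercept, forecast_week_8, on_track)  # 10.0 0.0 80.0 False
```

Here the trend forecasts only 80% completion by the deadline, so a dashboard would flag this learner as off track.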

Prescriptive analytics

Prescriptive analytics is a statistical method used to generate recommendations and make decisions

based on the computational findings of algorithmic models.

Generating automated decisions or recommendations requires specific and unique algorithmic

models and clear direction from those utilizing the analytical technique. A recommendation cannot

be generated without knowing what to look for or what problem is desired to be solved. In this

way, prescriptive analytics begins with a problem.

Example

A Training Manager uses predictive analysis to discover that most learners without a particular

skill will not complete the newly launched course. What could be done? Now prescriptive analytics

can be of assistance on the matter and help determine options for action. Perhaps an algorithm can

detect the learners who require that new course, but lack that particular skill, and send an automated

recommendation that they take an additional training resource to acquire the missing skill.
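The Training Manager example can be sketched as a simple rule-based recommender in Python. The learner data, the skill name, and the course name are hypothetical, and a real prescriptive system would typically combine such rules with predictive models rather than rely on a single fixed rule.

```python
# Hypothetical data: which skills each learner has, and who is enrolled in the new course.
learner_skills = {
    "anil": {"excel", "sql"},
    "bina": {"excel"},
    "chen": {"sql", "python"},
}
enrolled_in_new_course = ["anil", "bina", "chen"]
required_skill = "sql"                     # skill flagged by the predictive analysis
bridging_resource = "Intro to SQL module"  # hypothetical additional training resource

def recommend(learners, skills, required, resource):
    """Return an automated recommendation for every enrolled learner lacking the skill."""
    return {
        name: f"Take '{resource}' before continuing"
        for name in learners
        if required not in skills.get(name, set())
    }

actions = recommend(enrolled_in_new_course, learner_skills, required_skill, bridging_resource)
print(actions)  # {'bina': "Take 'Intro to SQL module' before continuing"}
```

The prediction (learners without the skill will not finish) becomes an action (send those learners a bridging resource), which is the step that distinguishes prescriptive from predictive analytics.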


The accuracy of a generated decision or recommendation, however, is only as good as the quality

of data and the algorithmic models developed. What may work for one company’s training needs

may not make sense when put into practice in another company’s training department. Models are

generally recommended to be tailored for each unique situation and need.

Descriptive vs Predictive vs Prescriptive Analytics

Descriptive Analytics is focused solely on historical data.

You can think of Predictive Analytics as then using this historical data to develop statistical models

that will then forecast about future possibilities.

Prescriptive Analytics takes Predictive Analytics a step further: it takes the possible forecasted outcomes, predicts the consequences of these outcomes, and recommends the actions that lead to the most desirable one.

Big Data Analytics Challenges

1. Need For Synchronization Across Disparate Data Sources

As data sets are becoming bigger and more diverse, there is a big challenge to incorporate them

into an analytical platform. If this is overlooked, it will create gaps and lead to wrong messages

and insights.

2. Acute Shortage Of Professionals Who Understand Big Data Analysis

Analysis is what makes the voluminous amount of data produced every minute useful. With the exponential rise of data, a huge demand for big data scientists and Big Data analysts has been created in the market. Because the job of a data scientist is multidisciplinary, business organizations need to hire data scientists with a varied set of skills. However, there is a sharp shortage of professionals who understand Big Data analysis in comparison to the massive amount of data being produced.

3. Getting Meaningful Insights Through The Use Of Big Data Analytics

It is imperative for business organizations to gain important insights from Big Data analytics, and it is equally important that the relevant departments have access to this information. Bridging the gap between producing these insights and getting them to the right people effectively is a big challenge for companies.


4. Getting Voluminous Data Into The Big Data Platform

It is hardly surprising that data is growing with every passing day. This simply indicates that

business organizations need to handle a large amount of data on a daily basis. The amount and variety of data available these days can overwhelm any data engineer, which is why it is considered vital to make data access easy and convenient for brand owners and managers.

5. Uncertainty Of Data Management Landscape

With the rise of Big Data, new technologies and companies are being developed every day.

However, a big challenge faced by the companies in the Big Data analytics is to find out which

technology will be best suited to them without the introduction of new problems and potential

risks.

6. Data Storage And Quality

Business organizations are growing at a rapid pace, and as companies and large business organizations grow, the amount of data they produce increases. Storing this massive amount of data is becoming a real challenge for everyone. Popular data storage options such as data lakes and warehouses are commonly used to gather and store large quantities of unstructured and structured data (data lakes, in particular, keep data in its native format). The real problem arises when a data lake or warehouse tries to combine unstructured and inconsistent data from diverse sources and encounters errors. Missing data, inconsistent data, logic conflicts, and duplicate data all result in data quality challenges.
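Several of the data-quality problems listed above (missing data, inconsistent data, duplicates) can be detected with simple checks before data from different sources is combined. A minimal Python sketch, assuming hypothetical customer records merged from two source systems and an assumed list of valid country codes:

```python
# Hypothetical records merged from two source systems.
records = [
    {"id": 1, "email": "a@example.com", "country": "IN"},
    {"id": 2, "email": None, "country": "IN"},             # missing value
    {"id": 3, "email": "c@example.com", "country": "India"},  # inconsistent coding
    {"id": 1, "email": "a@example.com", "country": "IN"},  # exact duplicate
]

def quality_report(rows, allowed_countries):
    """Flag duplicate, incomplete, and inconsistently coded rows by their id."""
    seen = set()
    report = {"missing": [], "inconsistent": [], "duplicates": []}
    for row in rows:
        key = tuple(sorted(row.items()))  # hashable fingerprint of the whole row
        if key in seen:
            report["duplicates"].append(row["id"])
            continue
        seen.add(key)
        if any(value is None for value in row.values()):
            report["missing"].append(row["id"])
        if row["country"] not in allowed_countries:
            report["inconsistent"].append(row["id"])
    return report

report = quality_report(records, allowed_countries={"IN", "US"})
print(report)  # {'missing': [2], 'inconsistent': [3], 'duplicates': [1]}
```

Logic conflicts (for example, a delivery date before the order date) need domain-specific rules and are not covered by these generic checks.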

7. Security And Privacy Of Data

Once business enterprises discover how to use Big Data, it brings them a wide range of possibilities

and opportunities. However, it also involves the potential risks associated with big data when it

comes to the privacy and the security of the data. The Big Data tools used for analysis and storage draw on data from disparate sources, which leads to a high risk of exposure and makes the data vulnerable. Thus, the rise of voluminous amounts of data increases privacy and security concerns.

Terminologies Used In Big Data Environments

As-a-service infrastructure

Data-as-a-service, software-as-a-service, and platform-as-a-service all refer to the idea that, rather than selling data, licences to use data, or platforms for running Big Data technology as a product, these can be provided “as a service”. This reduces the upfront capital investment necessary for customers to begin putting their data, or platforms, to work for them, as the provider bears the costs of setting up and hosting the infrastructure. For the customer, as-a-service infrastructure can greatly reduce the initial cost and setup time of getting Big Data initiatives up and running.

Data science

Data science is the professional field that deals with turning data into value such as new insights

or predictive models. It brings together expertise from fields including statistics, mathematics,

computer science, and communication, as well as domain expertise such as business knowledge. Data scientist has in recent years been ranked the No. 1 job in the U.S., based on current demand, salary, and career opportunities.

Data mining

Data mining is the process of discovering insights from data. In terms of Big Data, because it is so

large, this is generally done by computational methods in an automated way using methods such

as decision trees, clustering analysis and, most recently, machine learning. This can be thought of

as using the brute mathematical power of computers to spot patterns in data which would not be

visible to the human eye due to the complexity of the dataset.
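Clustering, one of the data-mining methods named above, can be illustrated with a bare-bones k-means on one-dimensional data. This is a teaching sketch under simplifying assumptions (1-D points, a fixed k, the first k points used as initial centroids, hypothetical purchase amounts); libraries used in practice add smarter initialization and work in many dimensions.

```python
def kmeans_1d(points, k, iterations=100):
    """Plain k-means on 1-D data; initial centroids are simply the first k points."""
    centroids = [float(p) for p in points[:k]]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: put each point in the cluster with the nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster (keep it if empty).
        new_centroids = [
            sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:  # converged: assignments no longer change
            break
        centroids = new_centroids
    return centroids, clusters

# Hypothetical purchase amounts from two kinds of customers.
amounts = [1, 2, 3, 10, 11, 12]
centroids, clusters = kmeans_1d(amounts, k=2)
print(centroids)  # [2.0, 11.0]
print(clusters)   # [[1, 2, 3], [10, 11, 12]]
```

The algorithm is never told which customers are "small" or "large" spenders; the two groups emerge from the data, which is the kind of pattern-spotting data mining automates at scale.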

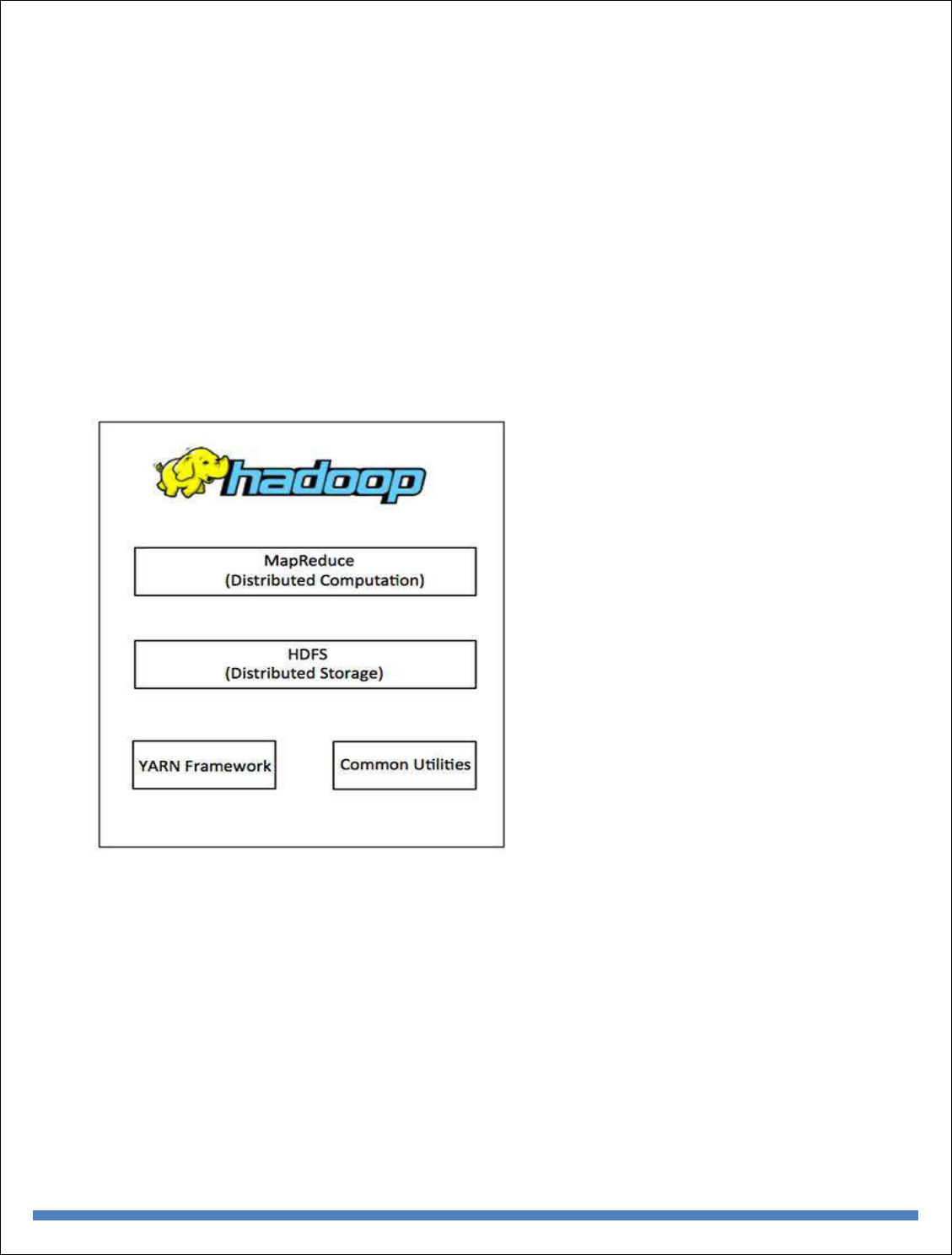

Hadoop

Hadoop is a framework for Big Data computing which has been released as open source software (under the Apache License), and so can freely be used by anyone. It consists of a number of modules, each tailored for a different vital step of the Big Data process, from file storage (Hadoop Distributed File System, HDFS) to carrying out data operations (Hadoop MapReduce, see below), and it is commonly paired with related projects such as the HBase database. It has become so popular due to its power and flexibility that it has developed its own industry of vendors (selling tailored distributions), support service providers, and consultants.

Predictive modelling

At its simplest, this is predicting what will happen next based on data about what has happened previously. In the Big Data age, because more data is available than ever before, predictions can become increasingly accurate. Predictive modelling is a core component of most Big Data initiatives, which aim to help us choose the course of action that will lead to the most desirable outcome. The speed of modern computers and the volume of available data mean that predictions can draw on a huge number of variables, each of which can be assessed for how likely it is to lead to success.

MapReduce

MapReduce is a computing procedure for working with large datasets, which was devised because of the difficulty of reading and analysing really Big Data using conventional computing methodologies. As its name suggests, it consists of two procedures: mapping (transforming each input record into intermediate key-value pairs in the format needed for analysis, e.g. tagging each person in a list with whether they are over 21) and reducing (performing an aggregate operation on all values that share a key, such as counting how many people in the dataset fall into each group).
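The two phases can be illustrated with the classic word-count example, simulated in plain Python. This is a single-process sketch, not Hadoop's API: the function names `map_phase` and `reduce_phase` are hypothetical, and the sort-and-group step stands in for the shuffle that the framework performs between the map and reduce phases on a cluster.

```python
from itertools import groupby
from operator import itemgetter

def map_phase(line):
    """Mapper: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield (word, 1)

def reduce_phase(word, counts):
    """Reducer: combine all values that share the same key."""
    return (word, sum(counts))

lines = ["big data needs big tools", "data tools"]

# Map every input record to intermediate key-value pairs.
intermediate = [pair for line in lines for pair in map_phase(line)]

# Shuffle/sort: bring all pairs with the same key together (done by the framework in Hadoop).
intermediate.sort(key=itemgetter(0))

# Reduce each group of values that share a key.
result = [
    reduce_phase(word, (count for _, count in group))
    for word, group in groupby(intermediate, key=itemgetter(0))
]
print(result)  # [('big', 2), ('data', 2), ('needs', 1), ('tools', 2)]
```

Because each mapper call looks at one line and each reducer call looks at one key, both phases can be spread across many machines without changing the logic.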


NoSQL

NoSQL refers to database formats designed to hold data that is not simply arranged into tables, rows, and columns, as is the case in a conventional relational database. This kind of database has proven very popular in Big Data applications because Big Data is often messy and unstructured, and does not easily fit into traditional database frameworks.

Python

Python is a programming language which has become very popular in the Big Data space due to its ability to work very well with large, unstructured datasets. It is considered to be easier to learn for a data science beginner than other languages such as R, and more flexible.

R Programming

R is another programming language commonly used in Big Data, and can be thought of as more

specialised than Python, being geared towards statistics. Its strength lies in its powerful handling

of structured data. Like Python, it has an active community of users who are constantly expanding

and adding to its capabilities by creating new libraries and extensions.

Recommendation engine

A recommendation engine is basically an algorithm, or collection of algorithms, designed to match

an entity (for example, a customer) with something they are looking for. Recommendation engines

used by the likes of Netflix or Amazon heavily rely on Big Data technology to gain an overview

of their customers and, using predictive modelling, match them with products to buy or content to

consume. The economic incentives offered by recommendation engines have been a driving force behind a lot of commercial Big Data initiatives and developments over the last decade.
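Below is a minimal sketch of the matching idea. It assumes a simple item co-occurrence approach ("customers who bought X also bought Y"). This is not how Netflix or Amazon actually build their engines; production systems use far richer predictive models. The customers and items are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase histories: customer -> set of items bought
baskets = {
    "u1": {"laptop", "mouse", "bag"},
    "u2": {"laptop", "mouse"},
    "u3": {"laptop", "bag"},
    "u4": {"phone", "case"},
}

# Count how often each ordered pair of items was bought by the same customer
co_counts = Counter()
for items in baskets.values():
    for a, b in combinations(sorted(items), 2):
        co_counts[(a, b)] += 1
        co_counts[(b, a)] += 1


def recommend(customer, k=2):
    """Score items the customer does not own by how often they co-occur with items they do own."""
    owned = baskets[customer]
    scores = Counter()
    for (a, b), n in co_counts.items():
        if a in owned and b not in owned:
            scores[b] += n
    # Highest score first; ties broken alphabetically for a stable result
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:k]]


print(recommend("u2"))  # ['bag']
print(recommend("u3"))  # ['mouse']
```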

Real-time

Real-time means “as it happens” and in Big Data refers to a system or process which is able to

give data-driven insights based on what is happening at the present moment. Recent years have

seen a large push for the development of systems capable of processing and offering insights in

real-time (or near-real-time), and advances in computing power as well as development of

techniques such as machine learning have made it a reality in many applications today.
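Real-time systems usually work on a stream of events and keep only a small, recent window of data in memory. Below is a minimal Python sketch of that idea. It assumes a fixed-size sliding window over numeric readings and a hypothetical alert threshold. It is only an illustration, not a streaming framework.

```python
from collections import deque


class SlidingWindowAverage:
    """Average of the most recent `size` readings, updated in O(1) per new reading."""

    def __init__(self, size):
        self.window = deque(maxlen=size)
        self.total = 0.0

    def add(self, value):
        if len(self.window) == self.window.maxlen:
            self.total -= self.window[0]  # the oldest reading is about to be evicted
        self.window.append(value)  # deque(maxlen=...) drops the oldest item automatically
        self.total += value
        return self.total / len(self.window)


ALERT_THRESHOLD = 50  # hypothetical threshold for flagging unusual activity

monitor = SlidingWindowAverage(size=3)
for reading in [10, 20, 30, 40, 100]:
    average = monitor.add(reading)
    if average > ALERT_THRESHOLD:
        print(f"alert: recent average {average:.1f} exceeds {ALERT_THRESHOLD}")
```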

Reporting

The crucial “last step” of many Big Data initiatives involves getting the right information to the people who need it to make decisions, at the right time. When this step is automated, analytics is applied to the insights themselves to ensure that they are communicated in a way that will be understood and easy to act on. This usually involves creating multiple reports based on the same data or insights, each intended for a different audience (for example, in-depth technical analysis for engineers, and an overview of the impact on the bottom line for C-level executives).

Spark


Spark is another open-source framework like Hadoop, but it was developed more recently and is better suited to cutting-edge Big Data tasks involving real-time analytics and machine learning. Unlike Hadoop, it does not include its own file system, though it is designed to work with Hadoop’s HDFS or a number of other storage options. For certain data processing workloads it can run up to 100 times faster than Hadoop MapReduce, thanks to its in-memory processing capability. This is why it is becoming an increasingly popular choice for projects involving deep learning, neural networks and other compute-intensive tasks.

Structured Data

Structured data is simply data that can be arranged neatly into charts and tables consisting of rows, columns or multi-dimensional matrices. This is traditionally the way that computers have stored

data, and information in this format can easily and simply be processed and mined for insights.

Data gathered from machines is often a good example of structured data, where various data points

– speed, temperature, rate of failure, RPM etc. – can be neatly recorded and tabulated for analysis.

Unstructured Data

Unstructured data is any data which cannot easily be put into conventional charts and tables. This

can include video data, pictures, recorded sounds, text written in human languages and a great deal

more. This data has traditionally been far harder to draw insight from using computers which were

generally designed to read and analyze structured information. However, since it has become

apparent that a huge amount of value can be locked away in this unstructured data, great efforts

have been made to create applications which are capable of understanding unstructured data – for

example visual recognition and natural language processing.

Visualization

Humans find it very hard to understand and draw insights from large amounts of text or numerical data. We can do it, but it takes time, and our concentration and attention are limited. For this reason, effort has been made to develop computer applications capable of rendering information in a visual form: charts and graphics which highlight the most important insights resulting from our Big Data projects. A subfield of reporting (see above), visualization is now often an automated process, with visualizations customized by algorithm to be understandable to the people who need to act or take decisions based on them.

Basic availability, Soft state and Eventual consistency

Basic availability means the system remains continuously available despite network failures, tolerating temporary inconsistency rather than refusing requests.

Soft state means the state of the system may change over time even without new input, as updates propagate between replicas. This is what makes eventual consistency possible.

Eventual consistency means that if no further updates are made to a given database item for a long enough period of time, all users will see the same value for that item.
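Below is a minimal simulation of the three properties. It assumes three in-memory replicas of a key-value store. Conflicts are resolved by a last-writer-wins rule based on write timestamps, which is one common approach; real systems such as Cassandra add many refinements. The replica names and data are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """One copy of a key-value store; every value is stored with the timestamp of its write."""
    name: str
    data: dict = field(default_factory=dict)

    def write(self, key, value, ts):
        self.data[key] = (ts, value)

    def read(self, key):
        entry = self.data.get(key)
        return None if entry is None else entry[1]

    def merge_from(self, other):
        """Anti-entropy step: for every key, keep whichever version has the newer timestamp."""
        for key, (ts, value) in other.data.items():
            if key not in self.data or self.data[key][0] < ts:
                self.data[key] = (ts, value)


replicas = [Replica("A"), Replica("B"), Replica("C")]
replicas[0].write("cart", ["book"], ts=1)         # first update reaches replica A only
replicas[2].write("cart", ["book", "pen"], ts=2)  # later update reaches replica C only

# Replicas still answer reads (basically available) but disagree for now
print([r.read("cart") for r in replicas])  # [['book'], None, ['book', 'pen']]

# No new writes arrive; replicas exchange state in the background (soft state changes)
for r in replicas:
    for other in replicas:
        if other is not r:
            r.merge_from(other)

print([r.read("cart") for r in replicas])  # every replica now returns ['book', 'pen']
```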

Top Analytics Tools

* R is a language for statistical computing and graphics. It is also used for big data analysis and provides a wide variety of statistical tests.

Features:

Effective data handling and storage facility,

It provides a suite of operators for calculations on arrays, in particular, matrices,

It provides coherent, integrated collection of big data tools for data analysis

It provides graphical facilities for data analysis which display either on-screen or on

hardcopy

* Apache Spark is a powerful open source big data analytics tool. It offers over 80 high-level

operators that make it easy to build parallel apps. It is used at a wide range of organizations to

process large datasets.

Features:

It can run applications in a Hadoop cluster up to 100 times faster in memory, and ten times faster on disk

It offers lightning-fast processing

Support for sophisticated analytics

Ability to integrate with Hadoop and existing Hadoop data

* Plotly is an analytics tool that lets users create charts and dashboards to share online.

Features:

Easily turn any data into eye-catching and informative graphics

It provides audited industries with fine-grained information on data provenance

Plotly offers unlimited public file hosting through its free community plan

* Lumify is a big data fusion, analysis, and visualization platform. It helps users to discover

connections and explore relationships in their data via a suite of analytic options.

Features:

It provides both 2D and 3D graph visualizations with a variety of automatic layouts


It provides a variety of options for analyzing the links between entities on the graph

It comes with specific ingest processing and interface elements for textual content, images,

and videos

Its Spaces feature allows you to organize work into a set of projects, or workspaces

It is built on proven, scalable big data technologies

* IBM SPSS Modeler is a predictive big data analytics platform. It delivers predictive models to individuals, groups, systems and the enterprise, and it has a range of advanced algorithms and analysis techniques.

Features:

Discover insights and solve problems faster by analyzing structured and unstructured data

Use an intuitive interface for everyone to learn

You can select from on-premises, cloud and hybrid deployment options

Quickly choose the best performing algorithm based on model performance

* MongoDB is a NoSQL, document-oriented database written in C, C++, and JavaScript. It is a free, open-source tool that supports multiple operating systems, including Windows Vista (and later versions), OS X (10.7 and later versions), Linux, Solaris, and FreeBSD.

Its main features include aggregation, ad hoc queries, the BSON format, sharding, indexing, replication, server-side execution of JavaScript, schemaless collections, capped collections, MongoDB Management Service (MMS), load balancing and file storage.

Features:

Easy to learn.

Provides support for multiple technologies and platforms.

No hiccups in installation and maintenance.

Reliable and low cost.


UNIT II

NoSQL

NoSQL is a non-relational DBMS that does not require a fixed schema, avoids joins, and is easy to scale. NoSQL databases are used for distributed data stores with humongous data storage needs.

NoSQL is used for Big Data and real-time web apps. For example, companies such as Twitter, Facebook and Google collect terabytes of user data every single day.

SQL

Structured Query language (SQL) pronounced as "S-Q-L" or sometimes as "See-Quel" is the

standard language for dealing with Relational Databases. A relational database defines

relationships in the form of tables.

SQL programming can be effectively used to insert, search, update, delete database records.
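You can try the four operations without installing a database server. Python's built-in sqlite3 module embeds a small relational database. The student table and its rows below are hypothetical sample data.

```python
import sqlite3

# In-memory database, so the example leaves no files behind
conn = sqlite3.connect(":memory:")
cur = conn.cursor()

# A relational table with a predefined schema
cur.execute("CREATE TABLE student (roll_no INTEGER PRIMARY KEY, name TEXT NOT NULL, marks INTEGER)")

# Insert records (placeholders avoid building SQL strings by hand)
cur.executemany(
    "INSERT INTO student (roll_no, name, marks) VALUES (?, ?, ?)",
    [(1, "Asha", 78), (2, "Ravi", 64), (3, "Meena", 91)],
)

# Update a record
cur.execute("UPDATE student SET marks = ? WHERE roll_no = ?", (70, 2))

# Delete a record
cur.execute("DELETE FROM student WHERE roll_no = ?", (1,))

# Search for records
rows = cur.execute(
    "SELECT name, marks FROM student WHERE marks >= ? ORDER BY marks DESC", (70,)
).fetchall()
print(rows)  # [('Meena', 91), ('Ravi', 70)]

conn.commit()
conn.close()
```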

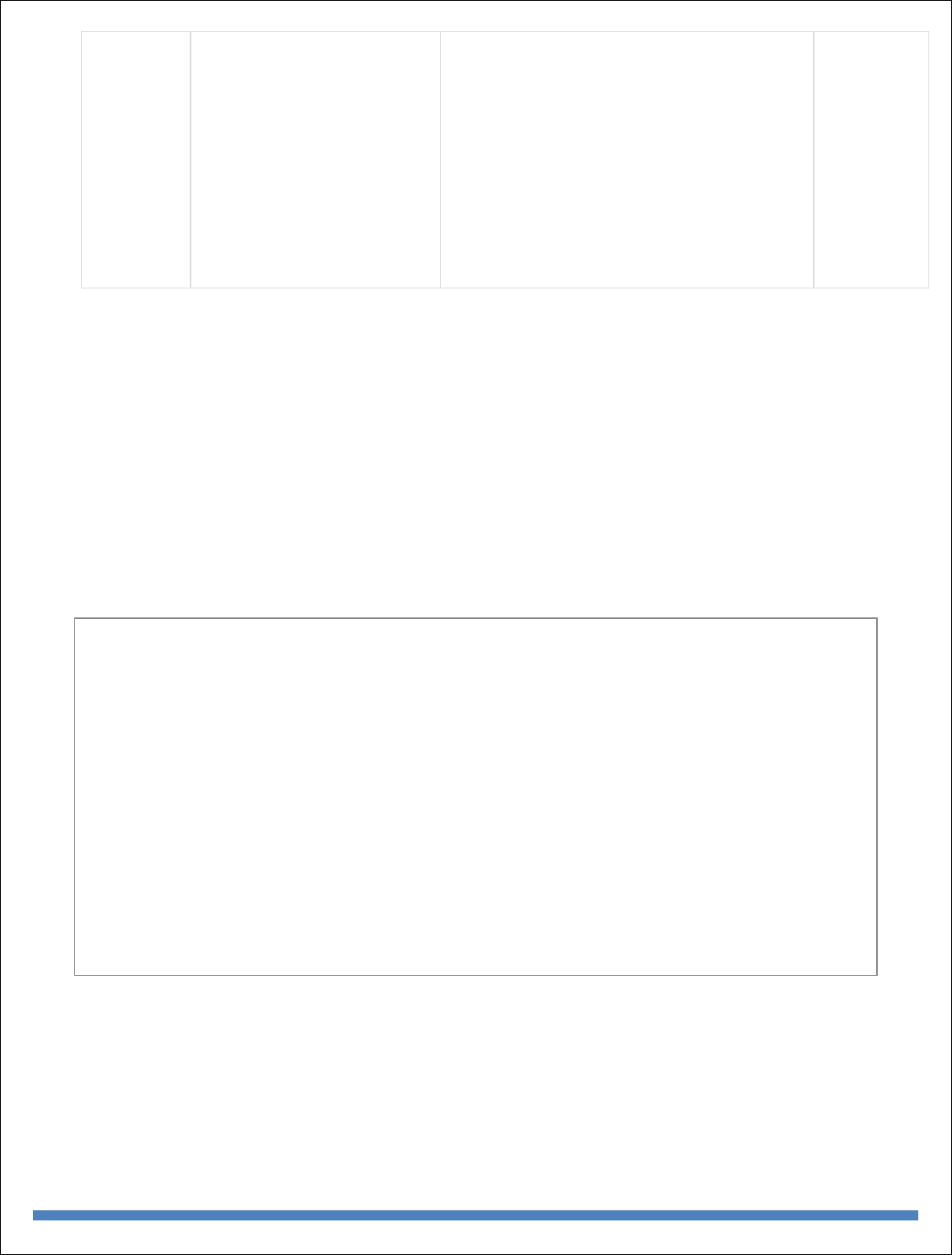

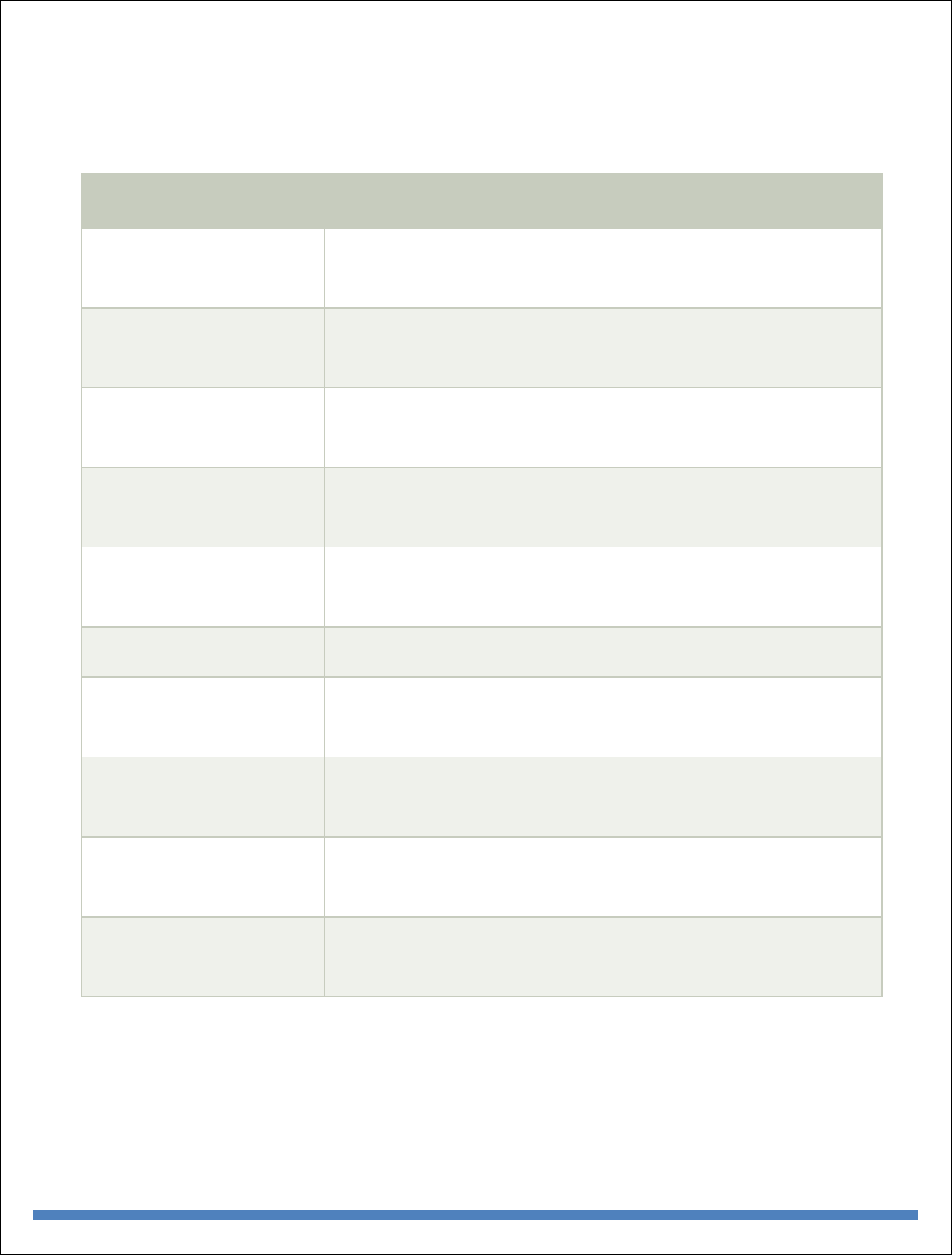

Comparison of SQL and NoSQL

Parameter | SQL | NoSQL
--- | --- | ---
Definition | SQL databases are primarily called RDBMS or relational databases | NoSQL databases are primarily called non-relational or distributed databases
Designed for | Traditional RDBMS uses SQL syntax and queries to analyze and get the data for further insights. They are used for OLTP as well as OLAP systems. | A NoSQL database system consists of various kinds of database technologies. These databases were developed in response to the demands of modern application development.
Query language | Structured Query Language (SQL) | No standard declarative query language; each product has its own query interface
Type | Table-based databases | Document-based, key-value, graph, or wide-column databases
Schema | Predefined schema | Dynamic schema for unstructured data
Ability to scale | Vertically scalable | Horizontally scalable
Examples | Oracle, Postgres, and MS-SQL | MongoDB, Redis, Neo4j, Cassandra, HBase
Best suited for | Complex, query-intensive environments | Not a good fit for complex queries
Hierarchical data storage | Not suitable for hierarchical data storage | More suitable for hierarchical data, as it supports the key-value pair method
Variations | One type with minor variations | Many different types, including key-value stores, document databases, and graph databases
Development year | Developed in the 1970s to deal with issues of flat-file storage | Developed in the late 2000s to overcome issues and limitations of SQL databases
Open source | A mix of open-source (e.g. Postgres, MySQL) and commercial (e.g. Oracle Database) | Open-source
Consistency | Should be configured for strong consistency | Depends on the DBMS: some offer strong consistency (e.g. MongoDB), whereas others offer only eventual consistency (e.g. Cassandra)
Best used for | Solving ACID problems | Solving data availability problems
Importance | Use when data validity is critical | Use when it is more important to have fast data than correct data
Best option | When you need to support dynamic queries | When you need to scale based on changing requirements
Hardware | Specialized DB hardware (Oracle Exadata, etc.) | Commodity hardware
Network | Highly available network (InfiniBand, FabricPath, etc.) | Commodity network (Ethernet, etc.)
Storage type | Highly available storage (SAN, RAID, etc.) | Commodity drive storage (standard HDDs, JBOD)
Best features | Cross-platform support, secure and free | Easy to use, high performance, and flexible
Top companies using | Hootsuite, CircleCI, Gauges | Airbnb, Uber, Kickstarter
Average salary | About $84,328 per year for a SQL developer in the U.S.A. | About $72,174 per year for a NoSQL developer
ACID vs. BASE model | ACID (Atomicity, Consistency, Isolation, Durability) is a standard for RDBMS | BASE (Basically Available, Soft state, Eventually consistent) is a model of many NoSQL systems


RDBMS Versus Hadoop

Distributed Computing Challenges

Designing a distributed system is neither easy nor straightforward. A number of challenges need to be overcome in order to get the ideal system. The major challenges in distributed systems are listed below:

1. Heterogeneity:

The Internet enables users to access services and run applications over a heterogeneous collection

of computers and networks. Heterogeneity (that is, variety and difference) applies to all of the

following:


o Hardware devices: computers, tablets, mobile phones, embedded devices, etc.

o Operating systems: MS Windows, Linux, Mac, Unix, etc.

o Network: Local network, the Internet, wireless network, satellite links, etc.

o Programming languages: Java, C/C++, Python, PHP, etc.

o Different roles of software developers, designers, system managers

Different programming languages use different representations for characters and data structures

such as arrays and records. These differences must be addressed if programs written in different

languages are to be able to communicate with one another. Programs written by different

developers cannot communicate with one another unless they use common standards, for example,

for network communication and the

representation of primitive data items and data structures in messages. For this to happen, standards

need to be agreed and adopted – as have the Internet protocols.
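Here is a small illustration of such a common standard. The Python sketch below uses the standard struct module to encode a message in a fixed, agreed layout with network (big-endian) byte order. A receiver on any hardware therefore decodes the same values. The message layout, a 32-bit sensor id followed by a 64-bit float, is a hypothetical example.

```python
import struct

# Hypothetical agreed message layout: unsigned 32-bit sensor id + 64-bit float reading.
# The "!" prefix selects network (big-endian) byte order with no padding.
MESSAGE_FORMAT = "!Id"


def encode(sensor_id, reading):
    """Serialize to bytes in the agreed layout, independent of the sender's CPU."""
    return struct.pack(MESSAGE_FORMAT, sensor_id, reading)


def decode(payload):
    """Parse bytes produced by any sender that follows the same layout."""
    return struct.unpack(MESSAGE_FORMAT, payload)


wire = encode(258, 36.6)
print(len(wire), wire[:4])  # 12 b'\x00\x00\x01\x02'
print(decode(wire))         # (258, 36.6)

# The same integer in little-endian order (how an x86 CPU stores it natively) differs:
print(struct.pack("<I", 258))  # b'\x02\x01\x00\x00'
```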

Middleware: The term middleware applies to a software layer that provides a programming

abstraction as well as masking the heterogeneity of the underlying networks, hardware, operating

systems and programming languages. Most middleware is implemented over the Internet protocols, which themselves mask the differences of the underlying networks, but all middleware deals with the differences in operating systems and hardware.

Heterogeneity and mobile code: The term mobile code is used to refer to program code that can

be transferred from one computer to another and run at the destination – Java applets are an

example. Code suitable for running on one computer is not necessarily suitable for running on

another because executable programs are normally specific both to the instruction set and to the

host operating system.

2. Transparency:

Transparency is defined as the concealment from the user and the application programmer of the

separation of components in a distributed system, so that the system is perceived as a whole rather

than as a collection of independent components. In other words, distributed systems designers must

hide the complexity of the systems as much as they can. The main forms of transparency in distributed systems are:

Access: hide differences in data representation and in how a resource is accessed

Location: hide where a resource is located

Migration: hide that a resource may move to another location

Relocation: hide that a resource may be moved to another location while in use

Replication: hide that a resource may be copied in several places

Concurrency: hide that a resource may be shared by several competing users

Failure: hide the failure and recovery of a resource

Persistence: hide whether a (software) resource is in memory or on disk

3. Openness

The openness of a computer system is the characteristic that determines whether the system can

be extended and re-implemented in various ways. The openness of distributed systems is

determined primarily by the degree to which new resource-sharing services can be added and be


made available for use by a variety of client programs. If the well-defined interfaces for a system

are published, it is easier for developers to add new features or replace sub-systems in the future.

Example: Twitter and Facebook publish APIs that allow developers to build their own software that interacts with these platforms.

4. Concurrency

Both services and applications provide resources that can be shared by clients in a distributed

system. There is therefore a possibility that several clients will attempt to access a shared resource

at the same time. For example, a data structure that records bids for an auction may be accessed

very frequently when it gets close to the deadline time. For an object to be safe in a concurrent

environment, its operations must be synchronized in such a way that its data remains consistent.

This can be achieved by standard techniques such as semaphores, which are used in most operating

systems.
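Below is a minimal Python sketch of the auction example, assuming bids arrive from several threads at once. A threading.Lock, equivalent to a semaphore initialised to 1, makes the compare-and-update of the highest bid atomic. Without it, two threads could both read the old maximum and one bid could be lost. The Auction class and the bid values are hypothetical.

```python
import threading


class Auction:
    """Shared auction record; a lock makes 'compare with the highest bid, then update' atomic."""

    def __init__(self):
        self._lock = threading.Lock()  # behaves like a semaphore initialised to 1
        self.highest_bid = 0
        self.highest_bidder = None
        self.bids_received = 0

    def place_bid(self, bidder, amount):
        with self._lock:  # only one thread at a time may read and modify the record
            self.bids_received += 1
            if amount > self.highest_bid:
                self.highest_bid = amount
                self.highest_bidder = bidder
                return True
            return False


auction = Auction()


def client(name, amounts):
    for amount in amounts:
        auction.place_bid(name, amount)


# Ten clients bid concurrently; client i bids i, i+10, i+20, ... up to 999
threads = [threading.Thread(target=client, args=(f"client{i}", range(i, 1000, 10))) for i in range(10)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(auction.highest_bid, auction.highest_bidder, auction.bids_received)  # 999 client9 1000
```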

5. Security

Many of the information resources that are made available and maintained in distributed systems

have a high intrinsic value to their users. Their security is therefore of considerable importance.

Security for information resources has three components:

confidentiality (protection against disclosure to unauthorized individuals)

integrity (protection against alteration or corruption),

availability for the authorized (protection against interference with the means to access the

resources).
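Of the three components, integrity is the easiest to illustrate in code. The minimal sketch below uses Python's standard hmac module. It assumes the sender and receiver share a secret key; the key and message are hypothetical. Any alteration of the message makes verification fail. Confidentiality would additionally require encryption, which is not shown here.

```python
import hashlib
import hmac

SECRET_KEY = b"shared-secret-for-illustration-only"  # hypothetical key known to sender and receiver


def sign(message: bytes) -> str:
    """Compute an HMAC-SHA256 tag that only holders of the key can produce."""
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def verify(message: bytes, tag: str) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign(message), tag)


msg = b"transfer 100 to account 42"
tag = sign(msg)
print(verify(msg, tag))                            # True
print(verify(b"transfer 900 to account 42", tag))  # False: the message was altered
```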

6. Scalability

Distributed systems must be scalable as the number of users increases. Scalability is defined by B. Clifford Neuman as follows:

A system is said to be scalable if it can handle the addition of users and resources without suffering a noticeable loss of performance or increase in administrative complexity.

Scalability has 3 dimensions:

o Size: the number of users and resources to be processed. The associated problem is overloading.

o Geography: the distance between users and resources. The associated problem is communication reliability.

o Administration: as the size of a distributed system increases, more of the system needs to be controlled. The associated problem is administrative complexity.

7. Failure Handling

Computer systems sometimes fail. When faults occur in hardware or software, programs may

produce incorrect results or may stop before they have completed the intended computation. The

handling of failures is particularly difficult.


Hadoop Overview

Hadoop is an Apache open-source framework written in Java that allows distributed processing of large datasets across clusters of computers using simple programming models. A Hadoop application works in an environment that provides distributed storage and computation across clusters of computers. Hadoop is designed to scale up from a single server to thousands of machines, each offering local computation and storage.

Hadoop Architecture

At its core, Hadoop has two major layers namely −

Processing/Computation layer (MapReduce), and

Storage layer (Hadoop Distributed File System).

MapReduce

MapReduce is a parallel programming model for writing distributed applications devised at

Google for efficient processing of large amounts of data (multi-terabyte data-sets), on large

clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner. The

MapReduce program runs on Hadoop which is an Apache open-source framework.

Hadoop Distributed File System

The Hadoop Distributed File System (HDFS) is based on the Google File System (GFS) and

provides a distributed file system that is designed to run on commodity hardware. It has many


similarities with existing distributed file systems. However, the differences from other distributed

file systems are significant. It is highly fault-tolerant and is designed to be deployed on low-cost

hardware. It provides high throughput access to application data and is suitable for applications

having large datasets.

Apart from the above-mentioned two core components, Hadoop framework also includes the

following two modules −

Hadoop Common − These are Java libraries and utilities required by other Hadoop

modules.

Hadoop YARN − This is a framework for job scheduling and cluster resource

management.

How Does Hadoop Work?

Building bigger servers with heavy configurations to handle large-scale processing is quite expensive. As an alternative, you can tie together many commodity computers, each with a single CPU, into a single functional distributed system. In practice, the clustered machines can read the dataset in parallel and provide much higher throughput, and the cluster is cheaper than one high-end server. This is the first motivation behind Hadoop: it runs across clusters of low-cost machines.

Hadoop runs code across a cluster of computers. This process includes the following core tasks

that Hadoop performs −

Data is initially divided into directories and files. Files are divided into uniform-sized blocks of 128 MB (the default since Hadoop 2.x) or 64 MB (the default in Hadoop 1.x); only the last block of a file may be smaller.

These files are then distributed across various cluster nodes for further processing.

HDFS, being on top of the local file system, supervises the processing.

Blocks are replicated for handling hardware failure.

Checking that the code was executed successfully.

Performing the sort that takes place between the map and reduce stages.

Sending the sorted data to a certain computer.

Writing the debugging logs for each job.
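The block and replication steps can be sketched as follows. The sketch assumes a 128 MB block size and the default replication factor of 3. Its round-robin placement is a deliberate simplification; real HDFS uses a rack-aware placement policy. The file size and DataNode names are hypothetical.

```python
BLOCK_SIZE_MB = 128  # default HDFS block size in Hadoop 2.x and later
REPLICATION = 3      # default HDFS replication factor


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the sizes of the blocks a file is cut into; only the last block may be smaller."""
    full_blocks, remainder = divmod(file_size_mb, block_size_mb)
    return [block_size_mb] * full_blocks + ([remainder] if remainder else [])


def place_replicas(n_blocks, datanodes, replication=REPLICATION):
    """Toy round-robin placement: each block is stored on `replication` distinct DataNodes."""
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")
    return {
        block: [datanodes[(block + r) % len(datanodes)] for r in range(replication)]
        for block in range(n_blocks)
    }


blocks = split_into_blocks(500)  # a hypothetical 500 MB file
print(blocks)  # [128, 128, 128, 116]
layout = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"])
for block, nodes in layout.items():
    print(f"block {block}: {nodes}")
```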

Advantages of Hadoop

Hadoop framework allows the user to quickly write and test distributed systems. It is efficient: it automatically distributes the data and work across the machines and, in turn, utilizes the underlying parallelism of the CPU cores.

Hadoop does not rely on hardware to provide fault-tolerance and high availability (FTHA); rather, the Hadoop library itself has been designed to detect and handle failures at the application layer.

Servers can be added to or removed from the cluster dynamically, and Hadoop continues to operate without interruption.

Another big advantage of Hadoop is that, apart from being open source, it is portable across platforms since it is Java based.

Processing Data with Hadoop - Managing Resources and Applications with Hadoop YARN

YARN splits the functions of resource management and job scheduling/monitoring into separate daemons: there is one global ResourceManager and one ApplicationMaster per application. An application can be either a single job or a DAG (directed acyclic graph) of jobs.

The ResourceManager has two components: the Scheduler and the ApplicationManager.

The Scheduler is a pure scheduler, i.e. it does not track the status of running applications. It only allocates resources to the various competing applications, and it does not restart tasks that fail because of hardware or application failures. The Scheduler allocates resources based on the abstract notion of a container, which is a bundle of resources such as CPU, memory, disk and network on a node.
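The minimal Python sketch below makes the container idea concrete. It shows a scheduler that hands out fixed-size containers from each node's memory and vcore capacity. It assumes a simple first-fit policy; YARN's real schedulers, such as the CapacityScheduler and FairScheduler, apply queues and sharing policies. Node names and sizes are hypothetical. Like the Scheduler described above, the sketch only allocates resources and does not track what runs in them.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_mem_mb: int
    free_vcores: int


@dataclass
class Container:
    node: str
    mem_mb: int
    vcores: int


def allocate(nodes, mem_mb, vcores, count):
    """Grant up to `count` containers of the requested size, filling nodes in order (first fit)."""
    granted = []
    for node in nodes:
        while len(granted) < count and node.free_mem_mb >= mem_mb and node.free_vcores >= vcores:
            node.free_mem_mb -= mem_mb
            node.free_vcores -= vcores
            granted.append(Container(node.name, mem_mb, vcores))
    return granted


cluster = [Node("node1", free_mem_mb=4096, free_vcores=4), Node("node2", free_mem_mb=2048, free_vcores=2)]
containers = allocate(cluster, mem_mb=1024, vcores=1, count=5)
print([c.node for c in containers])  # ['node1', 'node1', 'node1', 'node1', 'node2']
```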

The ApplicationManager performs the following tasks:

Accepts job submissions from clients.

Negotiates the first container for executing the application-specific ApplicationMaster.

Restarts the ApplicationMaster container on failure.

The responsibilities of the ApplicationMaster are:

Negotiating appropriate resource containers from the Scheduler.

Tracking container status and monitoring progress.

YARN supports resource reservation through the Reservation System. With it, a user can reserve resources over time, subject to temporal constraints such as deadlines, for the execution of a particular job. The Reservation System makes sure that the resources are available to the job until its completion, and it also performs admission control for reservations.

YARN can scale beyond a few thousand nodes through YARN Federation, which wires multiple sub-clusters together so that they appear as a single massive cluster. Many independent clusters can thus be used together for a single large job, which makes very large-scale systems possible.

Let us summarize how Hadoop works step by step:

Input data is broken into blocks of 128 MB, and the blocks are then moved to different nodes.

Once all the blocks of the data are stored on data-nodes, the user can process the data.

Resource Manager then schedules the program (submitted by the user) on individual nodes.

Once all the nodes process the data, the output is written back to HDFS.


Interacting with Hadoop Ecosystem

Hadoop Ecosystem

Hadoop has an ecosystem that has evolved from its three core components: processing, resource management, and storage. In this topic, you will learn the components of the Hadoop ecosystem and how they perform their roles during Big Data processing. The Hadoop ecosystem is continuously growing to meet the needs of Big Data. It comprises the following twelve components:

HDFS(Hadoop Distributed file system)

HBase

Sqoop

Flume

Spark

Hadoop MapReduce

Pig

Impala

Hive

Cloudera Search

Oozie

Hue.

Let us understand the role of each component of the Hadoop ecosystem.

Components of Hadoop Ecosystem

Let us start with the first component HDFS of Hadoop Ecosystem.

HDFS (HADOOP DISTRIBUTED FILE SYSTEM)

HDFS is a storage layer for Hadoop.

HDFS is suitable for distributed storage and processing, that is, while the data is being

stored, it first gets distributed and then it is processed.

HDFS provides Streaming access to file system data.

HDFS provides file permission and authentication.

HDFS provides a command-line interface for interacting with Hadoop.

So what stores data in HDFS? One example is HBase, which stores its data in HDFS.

HBase

HBase is a NoSQL, or non-relational, database.

HBase is mainly used when you need random, real-time read or write access to your Big Data.

It supports high volumes of data and high throughput.

In HBase, a table can have thousands of columns.

UNIT-III

INTRODUCTION TO MONGODB AND MAPREDUCE PROGRAMMING

MongoDB is a cross-platform, document-oriented database that provides high performance, high availability, and easy scalability. MongoDB works on the concept of collections and documents.

Database

Database is a physical container for collections. Each database gets its own set of files on the file

system. A single MongoDB server typically has multiple databases.

Collection

Collection is a group of MongoDB documents. It is the equivalent of an RDBMS table. A

collection exists within a single database. Collections do not enforce a schema. Documents within

a collection can have different fields. Typically, all documents in a collection are of similar or

related purpose.

Document

A document is a set of key-value pairs. Documents have dynamic schema. Dynamic schema means

that documents in the same collection do not need to have the same set of fields or structure, and

common fields in a collection's documents may hold different types of data.

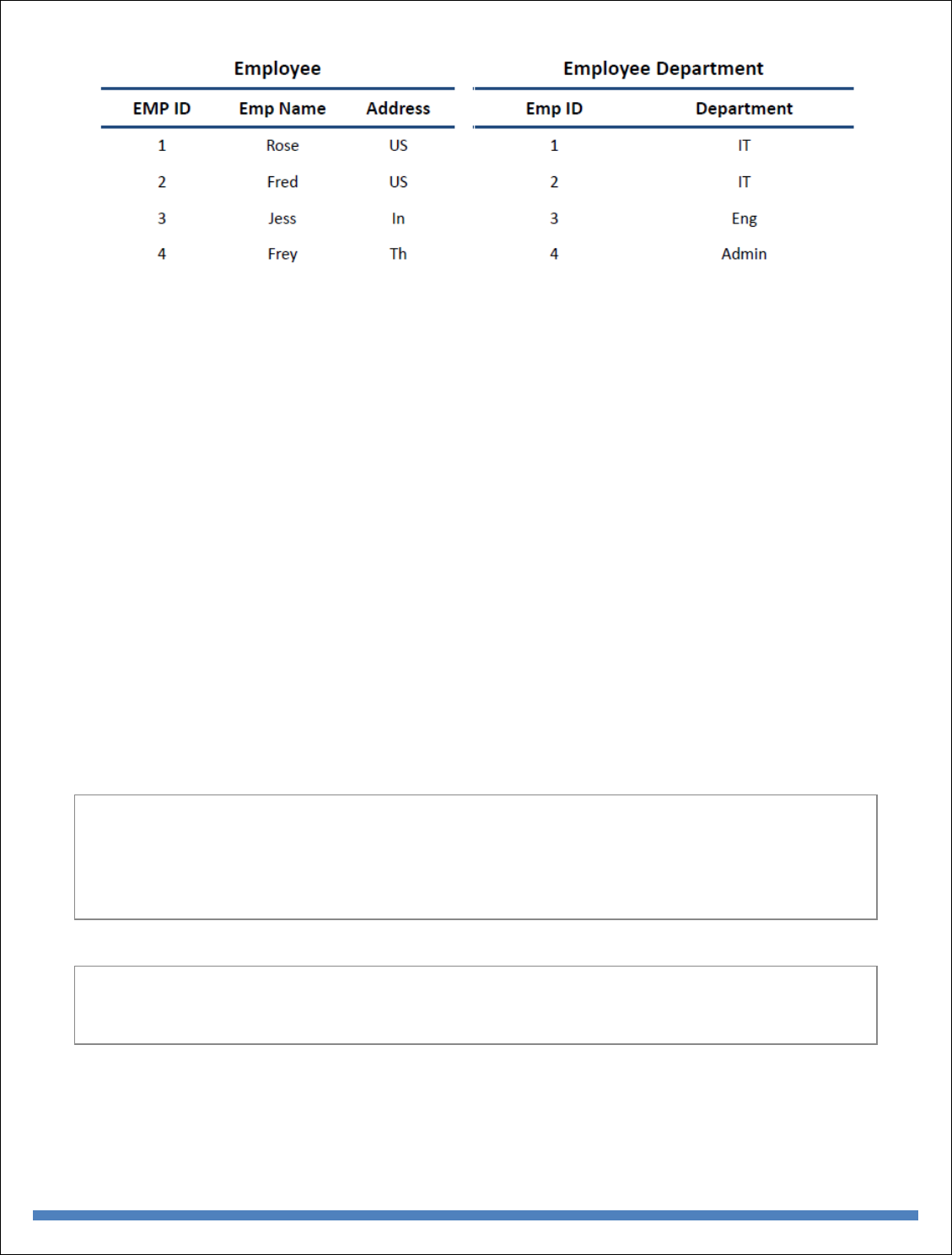

The following table shows the relationship of RDBMS terminology with MongoDB.

RDBMS | MongoDB
--- | ---
Database | Database
Table | Collection
Tuple/Row | Document
Column | Field
Table Join | Embedded Documents
Primary Key | Primary Key (default key _id provided by MongoDB itself)
Database server: mysqld/Oracle | Database server: mongod
Database client: mysql/sqlplus | Database client: mongo

Sample Document

The following example shows the document structure of a blog site, which is simply a set of comma-separated key-value pairs.

{
   _id: ObjectId("7df78ad8902c"), // shortened for illustration; a real ObjectId has 24 hexadecimal characters
   title: 'MongoDB Overview',
   description: 'MongoDB is no sql database',
   by: 'tutorials point',
   url: 'http://www.tutorialspoint.com',
   tags: ['mongodb', 'database', 'NoSQL'],
   likes: 100,
   comments: [
      {
         user: 'user1',
         message: 'My first comment',
         dateCreated: new Date(2011,1,20,2,15),
         like: 0
      },
      {
         user: 'user2',
         message: 'My second comments',
         dateCreated: new Date(2011,1,25,7,45),
         like: 5
      }
   ]
}

_id is a 12-byte value, usually displayed as a 24-character hexadecimal string, that ensures the uniqueness of every document. You can provide _id while inserting a document; if you don’t, MongoDB provides a unique id for every document. Of these 12 bytes, the first 4 bytes hold the current timestamp, the next 3 bytes the machine id, the next 2 bytes the process id of the MongoDB server, and the remaining 3 bytes a simple incrementing value. (Newer MongoDB versions replace the machine id and process id with a 5-byte random value generated once per process.)
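You can check the layout by decoding one of the ObjectIds that appear in the find() output later in these notes. In the minimal Python sketch below, decode_object_id is a hypothetical helper, not part of a MongoDB driver. It reads the first 4 bytes as a big-endian Unix timestamp and the last 3 bytes as the counter. The 5 bytes in between are returned unchanged, since their meaning depends on the MongoDB version.

```python
from datetime import datetime, timezone


def decode_object_id(hex_id):
    """Split a 24-hex-character ObjectId into creation time, middle bytes and counter."""
    raw = bytes.fromhex(hex_id)
    if len(raw) != 12:
        raise ValueError("an ObjectId is exactly 12 bytes (24 hex characters)")
    seconds = int.from_bytes(raw[0:4], "big")   # creation time, seconds since the Unix epoch
    middle = raw[4:9].hex()                     # machine + process id (old layout) or random value (newer)
    counter = int.from_bytes(raw[9:12], "big")  # incrementing counter
    return datetime.fromtimestamp(seconds, tz=timezone.utc), middle, counter


created, middle, counter = decode_object_id("5dd4e2cc0821d3b44607534c")
print(created.isoformat())  # 2019-11-20T06:53:00+00:00

# The second document inserted in the same call differs only in its counter
_, _, next_counter = decode_object_id("5dd4e2cc0821d3b44607534d")
print(next_counter - counter)  # 1
```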

Any relational database has a typical schema design that shows the number of tables and the relationships between these tables. In MongoDB, by contrast, the database does not enforce relationships between collections; related data is typically embedded in a single document.

Advantages of MongoDB over RDBMS

Schemaless − MongoDB is a document database in which one collection holds different

documents. Number of fields, content and size of the document can differ from one

document to another.

Structure of a single object is clear.

No complex joins.

Deep query-ability. MongoDB supports dynamic queries on documents using a document-

based query language that's nearly as powerful as SQL.

Tuning.

Ease of scale-out − MongoDB is easy to scale.

Conversion/mapping of application objects to database objects not needed.

Uses internal memory for storing the (windowed) working set, enabling faster access of

data.


Why Use MongoDB?

Document Oriented Storage − Data is stored in the form of JSON style documents.

Index on any attribute

Replication and high availability

Auto-Sharding

Rich queries

Fast in-place updates

Professional support by MongoDB

Where to Use MongoDB?

Big Data

Content Management and Delivery

Mobile and Social Infrastructure

User Data Management

Data Hub

MongoDB supports many datatypes. Some of them are −

String − This is the most commonly used datatype to store the data. String in MongoDB

must be UTF-8 valid.

Integer − This type is used to store a numerical value. Integers can be stored as 32-bit or 64-bit values.

Boolean − This type is used to store a boolean (true/ false) value.

Double − This type is used to store floating point values.

Min/ Max keys − This type is used to compare a value against the lowest and highest

BSON elements.

Arrays − This type is used to store arrays or list or multiple values into one key.

Timestamp − This type is used to store a timestamp. This can be handy for recording when a document has been modified or added.

Object − This datatype is used for embedded documents.

Null − This type is used to store a Null value.

Symbol − This datatype is used identically to a string; however, it's generally reserved for

languages that use a specific symbol type.

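Date − This datatype stores a date and time as the number of milliseconds since the Unix epoch (1 January 1970). You can specify your own date and time by creating a Date object and passing the year, month and day into it. Months count from 0 in JavaScript, so new Date(2013,11,10) is 10 December 2013.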

Object ID − This datatype is used to store the document’s ID.


Binary data − This datatype is used to store binary data.

Code − This datatype is used to store JavaScript code into the document.

Regular expression − This datatype is used to store regular expression.

The find() Method

To query data from MongoDB collection, you need to use MongoDB's find() method.

Syntax

The basic syntax of find() method is as follows −

>db.COLLECTION_NAME.find()

find() method will display all the documents in a non-structured way.

Example

Assume we have created a collection named mycol as −

> use sampleDB

switched to db sampleDB

> db.createCollection("mycol")

{ "ok" : 1 }

>

And inserted 2 documents into it using the insert() method (newer shells prefer insertMany() for this), as shown below −

> db.mycol.insert([
   {
      title: "MongoDB Overview",
      description: "MongoDB is no SQL database",
      by: "tutorials point",
      url: "http://www.tutorialspoint.com",
      tags: ["mongodb", "database", "NoSQL"],
      likes: 100
   },
   {
      title: "NoSQL Database",
      description: "NoSQL database doesn't have tables",
      by: "tutorials point",
      url: "http://www.tutorialspoint.com",
      tags: ["mongodb", "database", "NoSQL"],
      likes: 20,
      comments: [
         {
            user: "user1",
            message: "My first comment",
            dateCreated: new Date(2013,11,10,2,35),
            like: 0
         }
      ]
   }
])

The following method retrieves all the documents in the collection −

> db.mycol.find()

{ "_id" : ObjectId("5dd4e2cc0821d3b44607534c"), "title" : "MongoDB Overview", "description"

: "MongoDB is no SQL database", "by" : "tutorials point", "url" : "http://www.tutorialspoint.com",

"tags" : [ "mongodb", "database", "NoSQL" ], "likes" : 100 }

{ "_id" : ObjectId("5dd4e2cc0821d3b44607534d"), "title" : "NoSQL Database", "description" :

"NoSQL database doesn't have tables", "by" : "tutorials point", "url" :

"http://www.tutorialspoint.com", "tags" : [ "mongodb", "database", "NoSQL" ], "likes" : 20,

"comments" : [ { "user" : "user1", "message" : "My first comment", "dateCreated" :

ISODate("2013-12-09T21:05:00Z"), "like" : 0 } ] }

>

The pretty() Method

To display the results in a formatted way, you can use pretty() method.

Syntax

>db.COLLECTION_NAME.find().pretty()

Example

The following example retrieves all the documents from the collection named mycol and displays them in an easy-to-read format.

> db.mycol.find().pretty()

{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534c"),
   "title" : "MongoDB Overview",
   "description" : "MongoDB is no SQL database",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 100
}
{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534d"),
   "title" : "NoSQL Database",
   "description" : "NoSQL database doesn't have tables",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 20,
   "comments" : [
      {
         "user" : "user1",
         "message" : "My first comment",
         "dateCreated" : ISODate("2013-12-09T21:05:00Z"),
         "like" : 0
      }
   ]
}

The findOne() Method

Apart from the find() method, there is the findOne() method, which returns only one document: the first document that matches the query, or null if no document matches.

Syntax

>db.COLLECTION_NAME.findOne()

Example

The following example retrieves the document whose title is 'MongoDB Overview'.

> db.mycol.findOne({title: "MongoDB Overview"})

{
   "_id" : ObjectId("5dd6542170fb13eec3963bf0"),
   "title" : "MongoDB Overview",
   "description" : "MongoDB is no SQL database",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 100
}
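Conceptually, findOne() is find() cut down to the first matching document, with null when nothing matches. The plain-JavaScript sketch below models that on an in-memory array. The array standing in for mycol and the helpers findAll and findOneDoc are hypothetical; the real methods run the query on the server and support far richer filters than the equality-only check used here.

```javascript
// Hypothetical in-memory stand-in for the mycol collection (only some fields kept).
const mycol = [
  { title: "MongoDB Overview", by: "tutorials point", likes: 100 },
  { title: "NoSQL Database", by: "tutorials point", likes: 20 },
];

// Equality-only filter: every field in the filter must equal the document's field.
const matches = (doc, filter) =>
  Object.entries(filter).every(([key, value]) => doc[key] === value);

// Models find(): every matching document.
const findAll = (docs, filter = {}) => docs.filter((d) => matches(d, filter));

// Models findOne(): the first matching document, or null if there is none.
const findOneDoc = (docs, filter = {}) => docs.find((d) => matches(d, filter)) ?? null;

console.log(findAll(mycol, { by: "tutorials point" }).length); // 2
console.log(findOneDoc(mycol, { title: "MongoDB Overview" }).likes); // 100
console.log(findOneDoc(mycol, { title: "Unknown" })); // null
```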

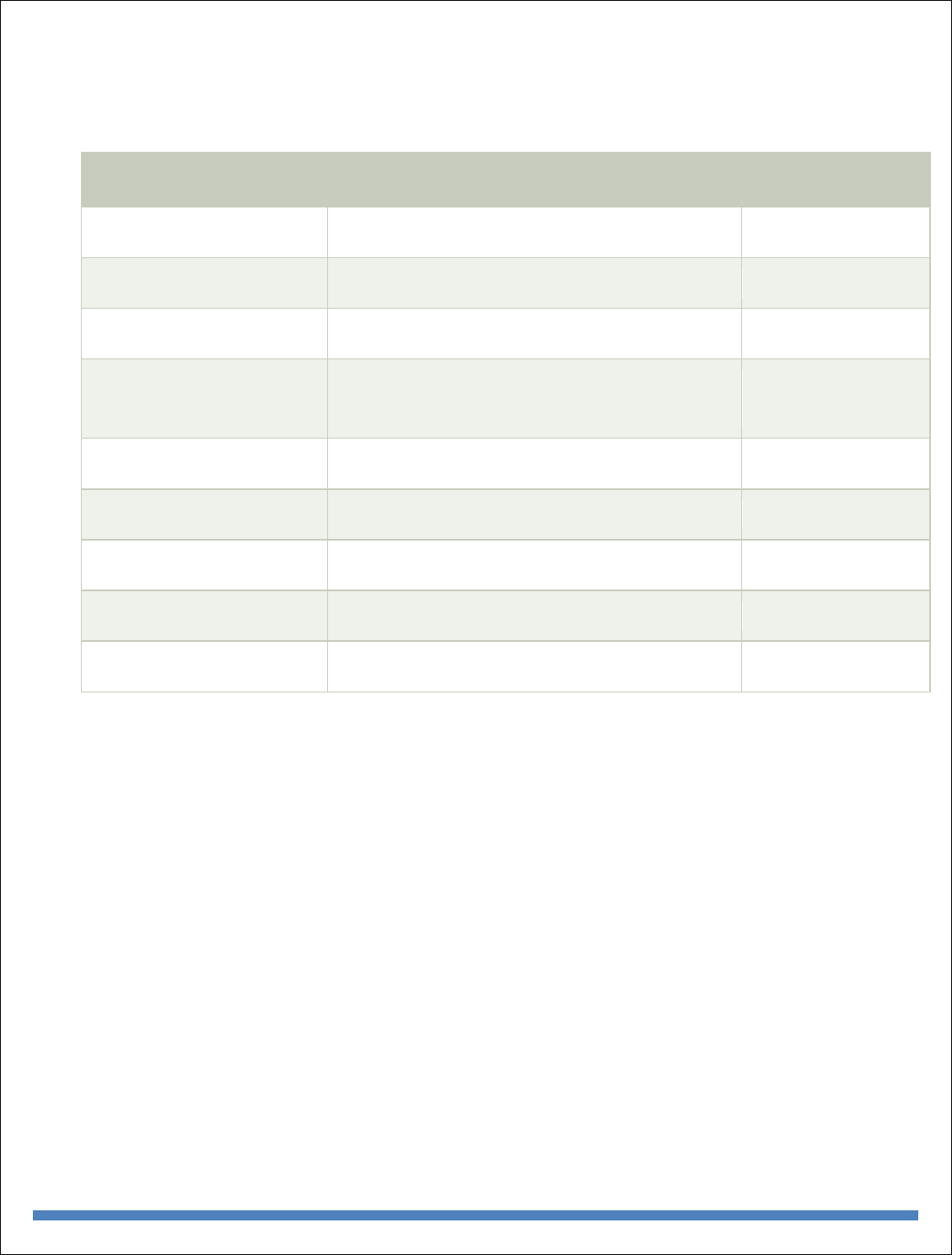

RDBMS Where Clause Equivalents in MongoDB

To query documents on the basis of a condition, you can use the following operators.

| Operation | Syntax | Example | RDBMS Equivalent |
|---|---|---|---|
| Equality | `{<key>:<value>}` or `{<key>:{$eq:<value>}}` | `db.mycol.find({"by":"tutorials point"}).pretty()` | where by = 'tutorials point' |
| Less Than | `{<key>:{$lt:<value>}}` | `db.mycol.find({"likes":{$lt:50}}).pretty()` | where likes < 50 |
| Less Than Equals | `{<key>:{$lte:<value>}}` | `db.mycol.find({"likes":{$lte:50}}).pretty()` | where likes <= 50 |
| Greater Than | `{<key>:{$gt:<value>}}` | `db.mycol.find({"likes":{$gt:50}}).pretty()` | where likes > 50 |
| Greater Than Equals | `{<key>:{$gte:<value>}}` | `db.mycol.find({"likes":{$gte:50}}).pretty()` | where likes >= 50 |
| Not Equals | `{<key>:{$ne:<value>}}` | `db.mycol.find({"likes":{$ne:50}}).pretty()` | where likes != 50 |
| Values in an array | `{<key>:{$in:[<value1>, <value2>, ..., <valueN>]}}` | `db.mycol.find({"name":{$in:["Raj", "Ram", "Raghu"]}}).pretty()` | where name matches any of the values in ["Raj", "Ram", "Raghu"] |
| Values not in an array | `{<key>:{$nin:[<value1>, <value2>, ..., <valueN>]}}` | `db.mycol.find({"name":{$nin:["Ramu", "Raghav"]}}).pretty()` | where name is not in ["Ramu", "Raghav"], or the name field does not exist at all |
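To make the semantics of these operators concrete, the sketch below evaluates single-field conditions against plain JavaScript objects. It is a simplified model, not MongoDB's implementation: it ignores BSON type ordering, array fields and nested paths, and the names matchField, findDocs and the sample data are hypothetical. Note that $ne and $nin also match documents in which the field is missing, which is the "does not exist at all" case in the table.

```javascript
// Simplified model of MongoDB comparison operators for one field.
// Not MongoDB's real implementation: no BSON type ordering, arrays, or nested paths.
function matchField(value, condition) {
  // A plain value (not an operator object) means implicit equality: {key: value}.
  if (condition === null || typeof condition !== "object" || Array.isArray(condition)) {
    return value === condition;
  }
  // Every operator listed in the condition object must hold.
  return Object.entries(condition).every(([op, arg]) => {
    const present = value !== undefined;
    switch (op) {
      case "$eq":  return value === arg;
      case "$ne":  return value !== arg;        // also true when the field is missing
      case "$lt":  return present && value < arg;
      case "$lte": return present && value <= arg;
      case "$gt":  return present && value > arg;
      case "$gte": return present && value >= arg;
      case "$in":  return arg.includes(value);
      case "$nin": return !arg.includes(value); // also true when the field is missing
      default: throw new Error(`unsupported operator ${op}`);
    }
  });
}

// Filter documents with {key: condition, ...}; every key must match.
const findDocs = (docs, query) =>
  docs.filter((doc) => Object.entries(query).every(([key, cond]) => matchField(doc[key], cond)));

// Hypothetical sample data; the third document has no name field.
const people = [{ name: "Raj", likes: 30 }, { name: "Ramu", likes: 50 }, { likes: 70 }];

console.log(findDocs(people, { likes: { $lt: 50 } }).length);                    // 1
console.log(findDocs(people, { likes: { $gte: 50 } }).length);                   // 2
console.log(findDocs(people, { name: { $in: ["Raj", "Ram", "Raghu"] } }).length); // 1
console.log(findDocs(people, { name: { $nin: ["Ramu", "Raghav"] } }).length);     // 2
```

The last query returns Raj and the document without a name, mirroring the $nin row of the table.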

AND in MongoDB

Syntax

To query documents based on the AND condition, you need to use the $and operator. Following is the basic syntax of AND −

>db.mycol.find({ $and: [ {<key1>:<value1>}, { <key2>:<value2>} ] })

Example

The following example shows all the tutorials that are written by 'tutorials point' and whose title is 'MongoDB Overview'.

> db.mycol.find({$and:[{"by":"tutorials point"},{"title": "MongoDB Overview"}]}).pretty()

{

"_id" : ObjectId("5dd4e2cc0821d3b44607534c"),

"title" : "MongoDB Overview",

"description" : "MongoDB is no SQL database",

"by" : "tutorials point",

"url" : "http://www.tutorialspoint.com",

"tags" : [

"mongodb",

"database",

"NoSQL"

],

"likes" : 100

}

>

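For the above example, the equivalent SQL where clause is where by = 'tutorials point' AND title = 'MongoDB Overview'. You can pass any number of key-value pairs to find(); listing several fields in a single filter document, for example {"by": "tutorials point", "title": "MongoDB Overview"}, is also treated as an AND of those conditions.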
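The plain-JavaScript sketch below models $and on an in-memory copy of mycol: a document is kept only if it matches every clause, and a single filter with several fields gives the same result. The array and the helpers matchesClause and andFilter are hypothetical and handle equality clauses only.

```javascript
// Hypothetical in-memory stand-in for mycol (only the fields used here).
const mycol = [
  { title: "MongoDB Overview", by: "tutorials point", likes: 100 },
  { title: "NoSQL Database", by: "tutorials point", likes: 20 },
];

// An equality clause such as {by: "tutorials point"} matches when every listed field is equal.
const matchesClause = (doc, clause) =>
  Object.entries(clause).every(([key, value]) => doc[key] === value);

// $and: keep a document only if it matches every clause in the array.
const andFilter = (clauses) => (doc) => clauses.every((c) => matchesClause(doc, c));

const explicitAnd = mycol.filter(andFilter([{ by: "tutorials point" }, { title: "MongoDB Overview" }]));

// Implicit AND: several fields in one filter document select the same documents.
const implicitAnd = mycol.filter((doc) =>
  matchesClause(doc, { by: "tutorials point", title: "MongoDB Overview" }));

console.log(explicitAnd.map((d) => d.title)); // [ 'MongoDB Overview' ]
console.log(implicitAnd.length === explicitAnd.length); // true
```

An explicit $and is mainly needed when the same field must appear in more than one clause, since a JavaScript object literal cannot hold the same key twice.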

OR in MongoDB

Syntax


To query documents based on the OR condition, you need to use the $or operator. Following is the basic syntax of OR −

>db.mycol.find(

{

$or: [

{key1: value1}, {key2:value2}

]

}

).pretty()

Example

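The following example shows all the tutorials that are written by 'tutorials point' or whose title is 'MongoDB Overview'. Both documents in mycol are written by 'tutorials point', so both are returned.

>db.mycol.find({$or:[{"by":"tutorials point"},{"title": "MongoDB Overview"}]}).pretty()

{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534c"),
   "title" : "MongoDB Overview",
   "description" : "MongoDB is no SQL database",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 100
}
{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534d"),
   "title" : "NoSQL Database",
   "description" : "NoSQL database doesn't have tables",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 20,
   "comments" : [
      {
         "user" : "user1",
         "message" : "My first comment",
         "dateCreated" : ISODate("2013-12-09T21:05:00Z"),
         "like" : 0
      }
   ]
}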

>
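The sketch below models $or in plain JavaScript: a document is kept if it matches at least one clause. It uses a hypothetical in-memory copy of mycol with equality-only clauses, and shows why both documents satisfy the query above.

```javascript
// Hypothetical in-memory stand-in for mycol (only the fields used here).
const mycol = [
  { title: "MongoDB Overview", by: "tutorials point", likes: 100 },
  { title: "NoSQL Database", by: "tutorials point", likes: 20 },
];

// An equality clause matches when every listed field is equal.
const matchesClause = (doc, clause) =>
  Object.entries(clause).every(([key, value]) => doc[key] === value);

// $or: keep a document if it matches at least one clause.
const orFilter = (clauses) => (doc) => clauses.some((c) => matchesClause(doc, c));

const result = mycol.filter(orFilter([{ by: "tutorials point" }, { title: "MongoDB Overview" }]));
console.log(result.map((d) => d.title)); // [ 'MongoDB Overview', 'NoSQL Database' ]
```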

Using AND and OR Together

Example

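The following example shows the documents that have more than 10 likes and that are either written by 'tutorials point' or titled 'MongoDB Overview'. The equivalent SQL where clause is where likes > 10 AND (by = 'tutorials point' OR title = 'MongoDB Overview'). Both documents in mycol (with 100 and 20 likes, both by 'tutorials point') satisfy this condition, so both are returned.

>db.mycol.find({"likes": {$gt:10}, $or: [{"by": "tutorials point"},
{"title": "MongoDB Overview"}]}).pretty()

{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534c"),
   "title" : "MongoDB Overview",
   "description" : "MongoDB is no SQL database",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 100
}
{
   "_id" : ObjectId("5dd4e2cc0821d3b44607534d"),
   "title" : "NoSQL Database",
   "description" : "NoSQL database doesn't have tables",
   "by" : "tutorials point",
   "url" : "http://www.tutorialspoint.com",
   "tags" : [
      "mongodb",
      "database",
      "NoSQL"
   ],
   "likes" : 20,
   "comments" : [
      {
         "user" : "user1",
         "message" : "My first comment",
         "dateCreated" : ISODate("2013-12-09T21:05:00Z"),
         "like" : 0
      }
   ]
}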

>
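When a filter combines a field condition with $or, the top-level entries are ANDed together and the $or array supplies one more condition. The plain-JavaScript sketch below models that on a hypothetical in-memory copy of mycol; the helper names are illustrative only.

```javascript
// Hypothetical in-memory stand-in for mycol (only the fields used here).
const mycol = [
  { title: "MongoDB Overview", by: "tutorials point", likes: 100 },
  { title: "NoSQL Database", by: "tutorials point", likes: 20 },
];

const matchesClause = (doc, clause) =>
  Object.entries(clause).every(([key, value]) => doc[key] === value);

// {"likes": {$gt: 10}}: the field must exist and exceed 10.
const likesOver = (limit) => (doc) => doc.likes !== undefined && doc.likes > limit;

// $or: [{"by": "tutorials point"}, {"title": "MongoDB Overview"}]
const byOrTitle = (doc) =>
  [{ by: "tutorials point" }, { title: "MongoDB Overview" }].some((c) => matchesClause(doc, c));

// likes > 10 AND (by = 'tutorials point' OR title = 'MongoDB Overview')
const result = mycol.filter((doc) => likesOver(10)(doc) && byOrTitle(doc));
console.log(result.length); // 2

// With a stricter threshold only the first document qualifies.
const stricter = mycol.filter((doc) => likesOver(50)(doc) && byOrTitle(doc));
console.log(stricter.map((d) => d.title)); // [ 'MongoDB Overview' ]
```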


NOR in MongoDB

Syntax

To query documents based on the NOR condition, you need to use the $nor operator, which selects the documents that match none of the given conditions. Following is the basic syntax of NOR −

>db.COLLECTION_NAME.find(
   {
      $nor: [
         {key1: value1}, {key2:value2}
      ]
   }
)

Example

Assume we have inserted three documents into the collection empDetails as shown below −

db.empDetails.insertMany(

[

{

First_Name: "Radhika",

Last_Name: "Sharma",

Age: "26",

e_mail: "radhika_sharma.123@gmail.com",

phone: "9000012345"

},

{

First_Name: "Rachel",

Last_Name: "Christopher",

Age: "27",

e_mail: "Rachel_Christopher.123@gmail.com",

phone: "9000054321"

},

{

First_Name: "Fathima",

Last_Name: "Sheik",

Age: "24",

e_mail: "Fathima_Sheik.123@gmail.com",

phone: "9000054321"

}

]

)

The following example retrieves the document(s) whose first name is not "Radhika" and whose last name is not "Christopher".

> db.empDetails.find(
   {
      $nor:[
         {"First_Name": "Radhika"},
         {"Last_Name": "Christopher"}
      ]
   }
).pretty()

{

"_id" : ObjectId("5dd631f270fb13eec3963bef"),

"First_Name" : "Fathima",

"Last_Name" : "Sheik",

"Age" : "24",

"e_mail" : "Fathima_Sheik.123@gmail.com",

"phone" : "9000054321"

}
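$nor is the negation of $or: a document is kept only if it matches none of the clauses. The plain-JavaScript sketch below reproduces the result above on a hypothetical in-memory copy of empDetails that keeps only the name fields.

```javascript
// Hypothetical in-memory copy of empDetails (only the name fields kept).
const empDetails = [
  { First_Name: "Radhika", Last_Name: "Sharma" },
  { First_Name: "Rachel", Last_Name: "Christopher" },
  { First_Name: "Fathima", Last_Name: "Sheik" },
];

const matchesClause = (doc, clause) =>
  Object.entries(clause).every(([key, value]) => doc[key] === value);

// $nor: keep a document only if it matches none of the clauses, i.e. NOT (A OR B).
const norFilter = (clauses) => (doc) => !clauses.some((c) => matchesClause(doc, c));

const result = empDetails.filter(
  norFilter([{ First_Name: "Radhika" }, { Last_Name: "Christopher" }]),
);
console.log(result.map((d) => d.First_Name)); // [ 'Fathima' ]
```

Radhika is excluded by the first clause and Rachel Christopher by the second, leaving only Fathima Sheik, as in the shell output.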

NOT in MongoDB

Syntax

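To query documents based on the NOT condition, you need to use the $not operator. Unlike $and, $or and $nor, $not does not take an array of conditions: it is applied to a single field and negates an operator expression, so it also matches documents that do not contain the field. Following is the basic syntax of NOT −

>db.COLLECTION_NAME.find(
   { <key>: { $not: { <operator-expression> } } }
).pretty()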

Example

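The following example retrieves the document(s) whose age is not greater than 25. Because Age is stored as a string, the comparison with "25" is a string comparison; it gives the expected result here only because every age has two digits.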

> db.empDetails.find( { "Age": { $not: { $gt: "25" } } } )

{

"_id" : ObjectId("5dd6636870fb13eec3963bf7"),

"First_Name" : "Fathima",

"Last_Name" : "Sheik",

"Age" : "24",

"e_mail" : "Fathima_Sheik.123@gmail.com",

"phone" : "9000054321"

}
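The sketch below models {"Age": {$not: {$gt: "25"}}} in plain JavaScript. It adds a hypothetical fourth document without an Age field to show that $not also keeps documents lacking the field, and it demonstrates why string comparison of numbers is fragile. The data beyond the three inserted employees is invented for illustration.

```javascript
// Hypothetical in-memory copy of empDetails, plus one invented document with no Age field.
const empDetails = [
  { First_Name: "Radhika", Age: "26" },
  { First_Name: "Rachel", Age: "27" },
  { First_Name: "Fathima", Age: "24" },
  { First_Name: "NoAge" }, // hypothetical: Age is missing
];

// {Age: {$gt: "25"}}: the field must exist and compare greater (string comparison here).
const gt = (value, arg) => value !== undefined && value > arg;

// {Age: {$not: {$gt: "25"}}}: the negation, so documents without Age also match.
const notGt = (value, arg) => !gt(value, arg);

const result = empDetails.filter((d) => notGt(d.Age, "25"));
console.log(result.map((d) => d.First_Name)); // [ 'Fathima', 'NoAge' ]

// Strings compare character by character: "100" > "25" is false, unlike 100 > 25.
console.log("100" > "25", 100 > 25); // false true
```

Storing Age as a number would make the comparison numeric and avoid the "100" versus "25" pitfall.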


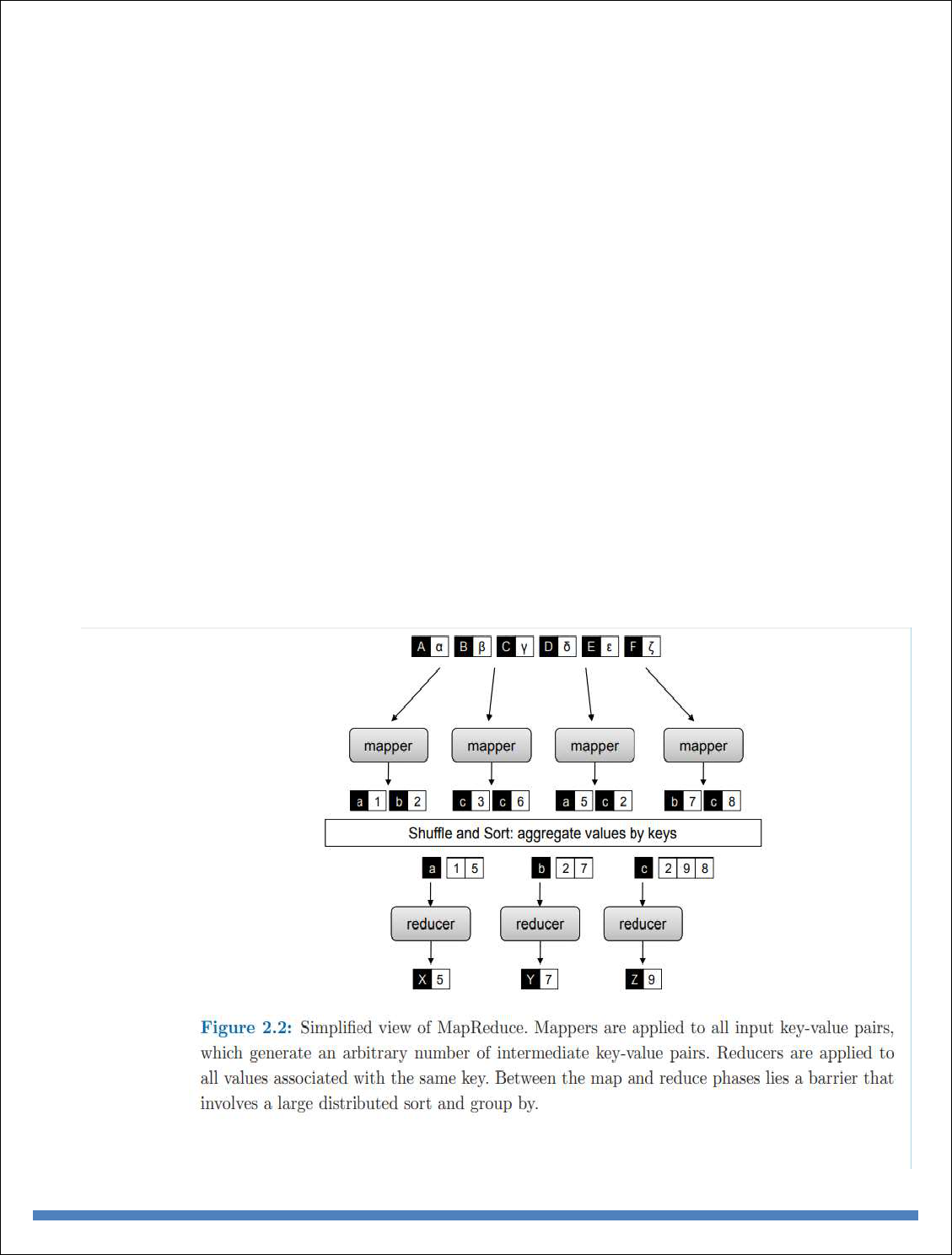

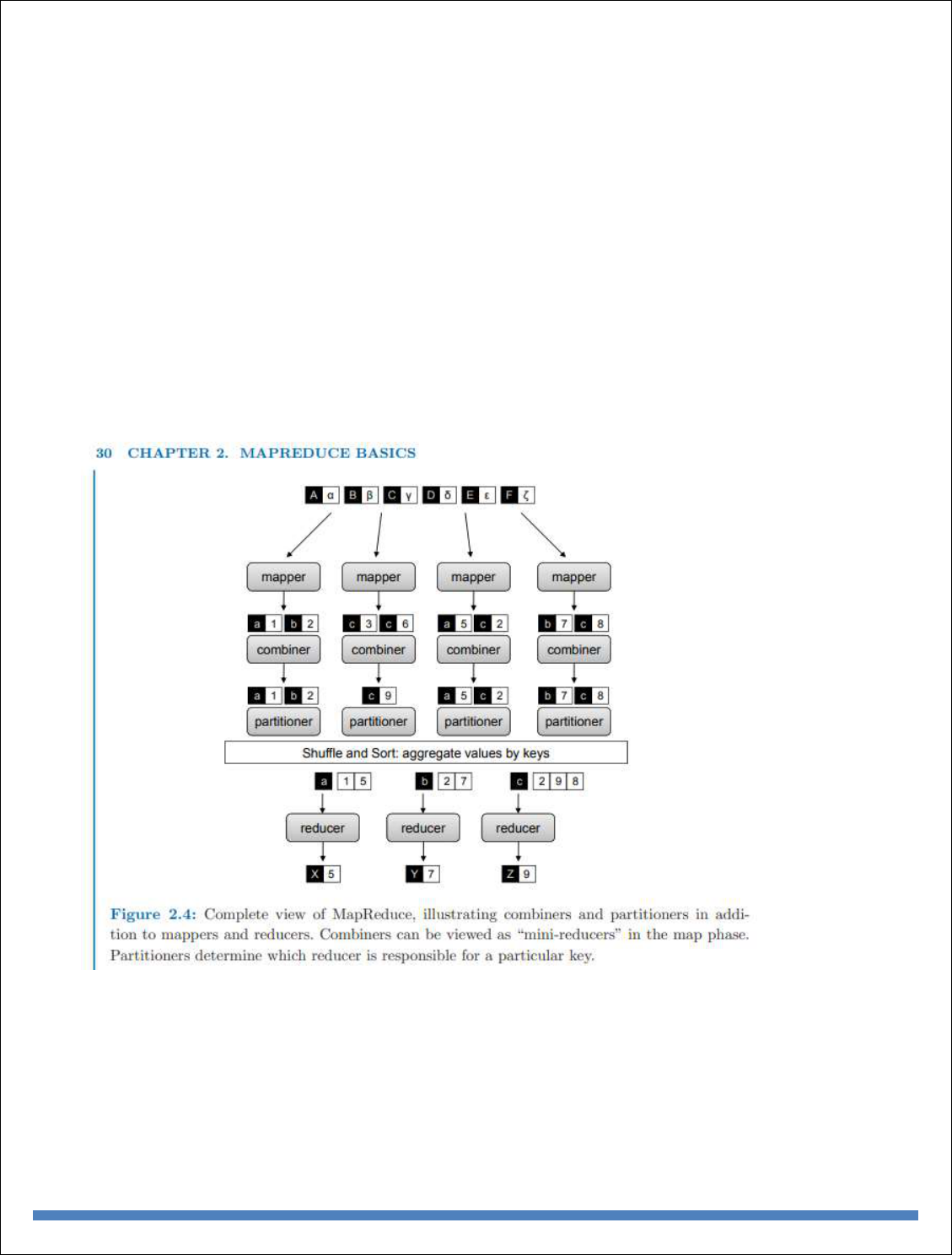

MapReduce:

MapReduce addresses the challenges of distributed programming by providing an abstraction that

isolates the developer from system-level details (e.g., locking of data structures, data starvation

issues in the processing pipeline, etc.). The programming model specifies simple and well-defined

interfaces between a small number of components, and therefore is easy for the programmer to

reason about. MapReduce maintains a separation of what computations are to be performed and

how those computations are actually carried out on a cluster of machines. The first is under the

control of the programmer, while the second is exclusively the responsibility of the execution

framework or “runtime”. The advantage is that the execution framework only needs to be designed

once and verified for correctness—thereafter, as long as the developer expresses computations in

the programming model, code is guaranteed to behave as expected. The upshot is that the developer

is freed from having to worry about system-level details (e.g., no more debugging race conditions

and addressing lock contention) and can instead focus on algorithm or application design.
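To illustrate this separation, the sketch below runs a word count in a single JavaScript process. The programmer supplies only mapper and reducer (what to compute); the hypothetical runMapReduce function stands in for the execution framework (how it is computed) by grouping intermediate pairs by key, the "shuffle" step. A real framework such as Hadoop distributes these phases across machines and handles failures, which this sketch does not attempt.

```javascript
// Programmer-supplied logic: what to compute.
// mapper emits an intermediate (word, 1) pair for every word in a line.
function mapper(line, emit) {
  for (const word of line.toLowerCase().split(/\W+/)) {
    if (word) emit(word, 1);
  }
}

// reducer combines all values that share a key into one result.
function reducer(word, counts) {
  return counts.reduce((sum, c) => sum + c, 0);
}

// Hypothetical stand-in for the execution framework: how it is computed.
// Everything runs sequentially here; a cluster would run map and reduce tasks in parallel.
function runMapReduce(inputs, map, reduce) {
  const groups = new Map(); // shuffle: key -> list of intermediate values
  for (const input of inputs) {
    map(input, (key, value) => {
      if (!groups.has(key)) groups.set(key, []);
      groups.get(key).push(value);
    });
  }
  const output = new Map();
  for (const [key, values] of groups) output.set(key, reduce(key, values));
  return output;
}

const counts = runMapReduce(
  ["MongoDB is a NoSQL database", "Hadoop is not a database"],
  mapper,
  reducer,
);
console.log(counts.get("database")); // 2
console.log(counts.get("hadoop"));   // 1
```

Swapping runMapReduce for a distributed implementation would not require any change to mapper or reducer, which is the point of the abstraction.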

Why is MapReduce important? In practical terms, it provides a very

effective tool for tackling large-data problems. But beyond that, MapReduce is important in how

it has changed the way we organize computations at a massive scale. MapReduce represents the

first widely adopted step away from the von Neumann model that has served as the foundation of

computer science for more than half a century. Valiant called this a bridging model [148], a